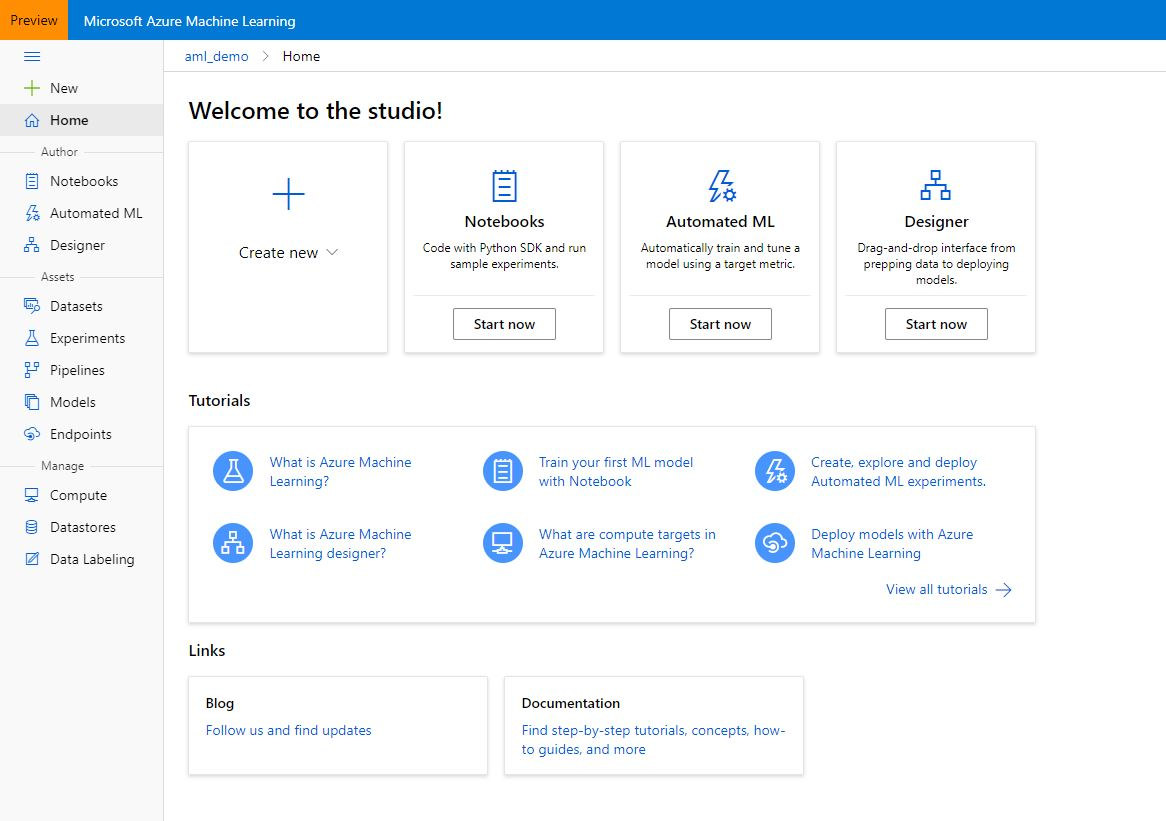

If you are a data scientist, you have probably struggled to manage all the different services (e.g. storage accounts, compute environments) needed to support your full machine learning (ML) development cycle. It is just as tough to keep track of model versions and runs, and to deploy ML models smoothly and efficiently. This is where Azure Machine Learning comes in: an Azure service that allows you to manage your end-to-end machine learning process.

In this insight, we cover Azure Machine Learning’s three major components and share our view on when to use each of them.

What is Azure Machine Learning?

Microsoft Azure Machine Learning (AML) is a cloud-based environment that can be used to train, deploy, manage and track machine learning models. The service aims to provide an end-to-end overview of the machine learning cycle by connecting existing resources (e.g. Azure storage accounts and Azure computes) into one single workspace. As such, interaction with these resources is facilitated, allowing data scientists to focus on the actual development of ML models, rather than software engineering problems.

The Azure Machine Learning workspace allows data scientists and data engineers to efficiently work together on ML projects, currently offering three ways to develop ML models:

- The Automated ML component, which automatically trains a model against a target metric once you provide a dataset and the ML task to be executed (e.g. classification).

- The Designer, which allows users to visually build ML workflows by dragging and dropping pre-made ML tasks onto a canvas and connecting them.

- The SDKs (Python and R), which allow you to build and run ML workflows from your favorite IDE or Azure ML’s built-in notebook functionality.


Introducing some important Azure ML concepts

Azure Machine Learning revolves around the concept of creating end-to-end machine learning pipelines and experiments.

- Pipelines: Pipelines are independently executable workflows of complete ML tasks. Subtasks are encapsulated as a series of steps within this pipeline, covering whatever content the user wants to execute. Pipelines can be created by using the SDK for Python or the Designer functionality.

- Experiments: Whenever you run a pipeline, the outputs will be submitted to an experiment in the Azure ML workspace. These experiments bundle runs together in one interface, which makes it easier to compare the results of your ML model development.

Additionally, the workspace allows these pipelines and experiments to interact freely with the services attached to it. These services include storage and compute environments:

- Dataset registry: The dataset registry can be used to link existing data storage services to your Azure ML workspace. Your data can reside in an Azure storage account, an Azure data warehouse, a database or your local file system (from where data can be uploaded directly). Once data connections are registered in the dataset registry, the data can be used in your Designer, Auto ML and SDK projects.

- Model registry: This registry is used to store and version the trained models you have saved. These models can later be deployed as a Container Instance or on a Kubernetes server.

- Endpoints: The endpoints tab provides the user with an overview of all deployed API endpoints of the deployed models and their status/health. Deployments can be done on Azure compute resources such as Azure Kubernetes Service (AKS).
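To make the two registries more concrete, here is a minimal sketch of how a dataset and a trained model could be registered with the Python SDK (v1, the `azureml-core` package). The function names, the datastore name, the file path and the model name are all hypothetical. The Azure imports sit inside the functions so that the snippet can be loaded without the SDK installed.

```python
def register_tabular_dataset(ws, datastore_name, relative_path, dataset_name):
    """Register a delimited file from an attached datastore as a versioned dataset.

    `ws` is an azureml.core.Workspace; all names passed in are illustrative.
    """
    from azureml.core import Dataset, Datastore

    # Look up a storage service that is already attached to the workspace.
    datastore = Datastore.get(ws, datastore_name)
    # Describe the data as a tabular dataset; at this point nothing is copied.
    dataset = Dataset.Tabular.from_delimited_files(path=(datastore, relative_path))
    # Registering makes it available to Designer, Auto ML and SDK projects.
    return dataset.register(workspace=ws, name=dataset_name, create_new_version=True)


def register_model(ws, model_path, model_name):
    """Store a saved model file in the model registry (a new version on each call)."""
    from azureml.core.model import Model

    return Model.register(workspace=ws, model_path=model_path, model_name=model_name)
```

With a workspace object at hand, a call could look like `register_tabular_dataset(ws, "my_datastore", "data/train.csv", "train-data")`, where every argument value is a placeholder.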

Now that we are familiar with the basic concepts of Azure ML pipelines and experiments, let’s dive into the three components.

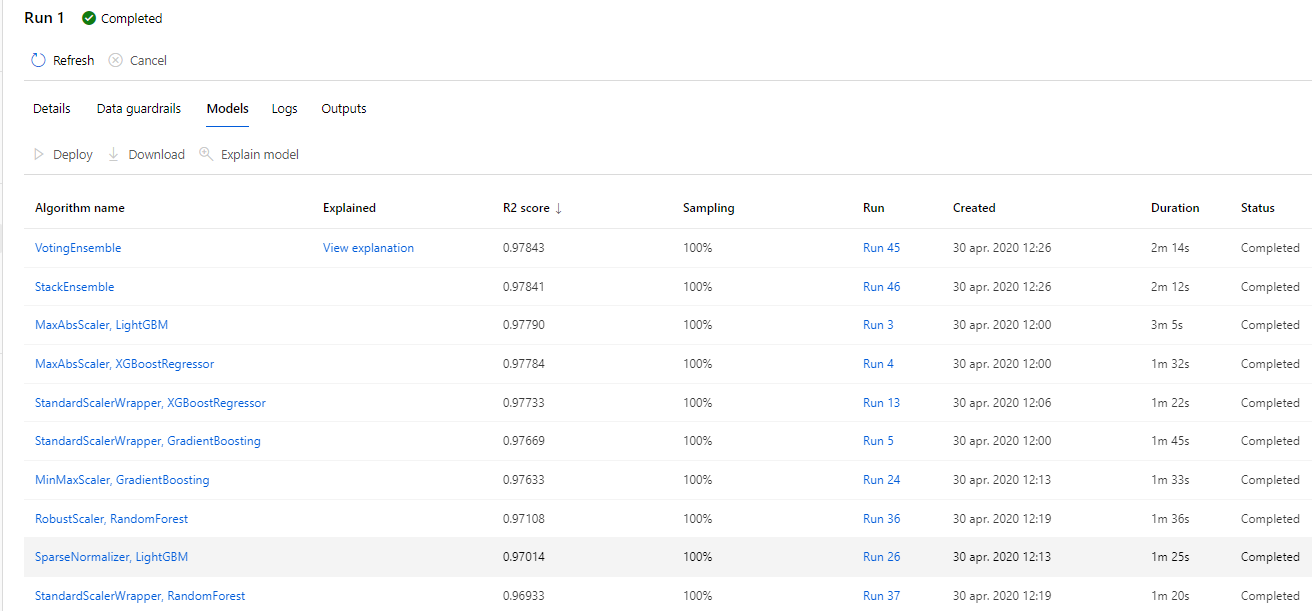

What is Azure Auto ML?

The automated ML functionality allows users to save time on the cumbersome process of training and tuning ML models. By simply passing a dataset, a target metric and the ML task to be executed, Azure ML will start generating high-performance models. Auto ML can be used for classification, regression and time-series forecasting tasks.
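The same three inputs (dataset, target metric and task) also drive Auto ML when it is started from the Python SDK instead of the UI. The sketch below assumes the v1 SDK with its automl extra installed and a local workspace `config.json`. The experiment name, the choice of `AUC_weighted` as metric and the number of cross-validation folds are illustrative assumptions, not recommendations.

```python
def automl_settings(label_column: str) -> dict:
    """Collect the inputs named in the text, plus the column to predict."""
    return {
        "task": "classification",            # the ML task to execute
        "primary_metric": "AUC_weighted",    # the target metric to optimise
        "label_column_name": label_column,   # supplied by the caller
        "n_cross_validations": 5,            # illustrative value
    }


def submit_automl(dataset_name: str, label_column: str, compute_name: str):
    """Submit an Auto ML run on a registered dataset and return the run object."""
    # Azure imports are local so the sketch can be loaded without the SDK.
    from azureml.core import Dataset, Experiment, Workspace
    from azureml.train.automl import AutoMLConfig

    ws = Workspace.from_config()
    training_data = Dataset.get_by_name(ws, name=dataset_name)
    config = AutoMLConfig(
        training_data=training_data,
        compute_target=ws.compute_targets[compute_name],
        **automl_settings(label_column),
    )
    # "automl-demo" is a hypothetical experiment name.
    return Experiment(ws, "automl-demo").submit(config)
```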


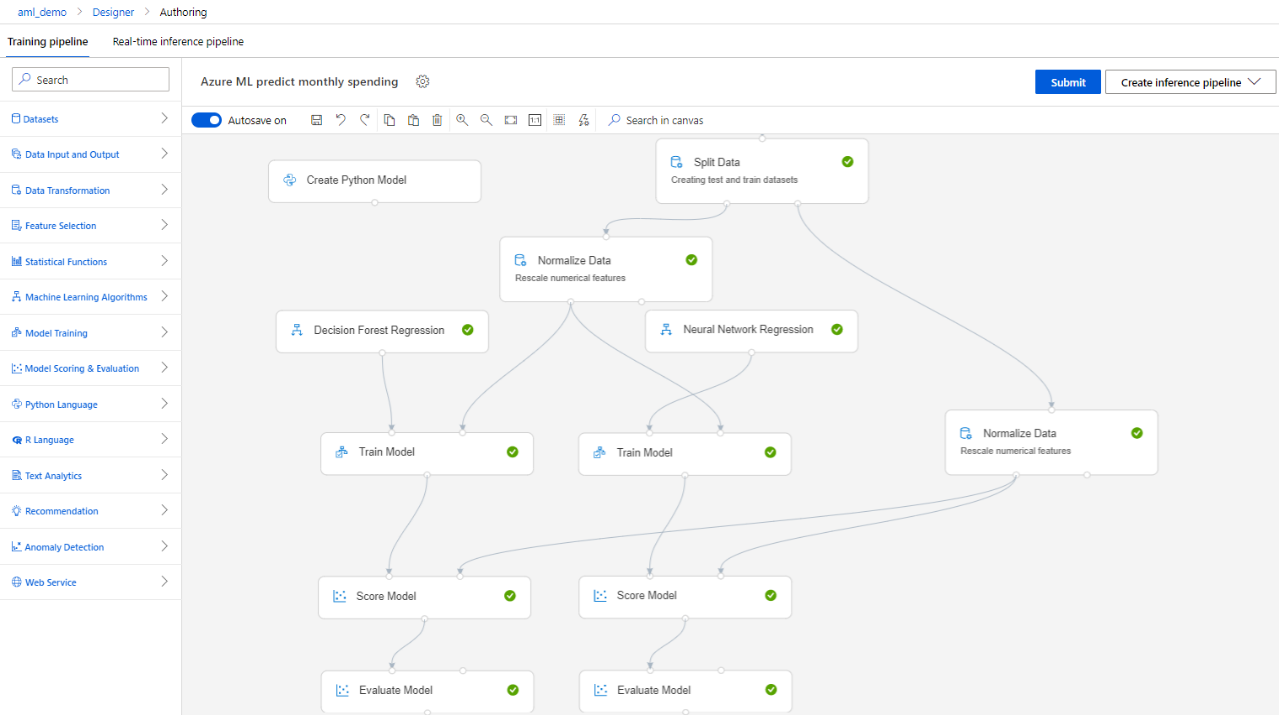

What is Azure ML Designer?

The Designer provides a user-friendly interface for users to build and test machine learning pipelines. These pipelines can be created by dragging prebuilt machine learning modules into the interface and connecting them to form a workflow. This means that people can create end-to-end machine learning pipelines, train models and deploy them without writing a single line of code.

The existing prebuilt modules cover most of the machine learning tasks that you would want to execute during the various phases of the machine learning development cycle (e.g. data preparation, model training). However, the Designer also allows users to create their own modules in R or Python. This increased flexibility comes in handy when working on real-life machine learning projects that require data scientists to, for example, perform advanced transformations on data.


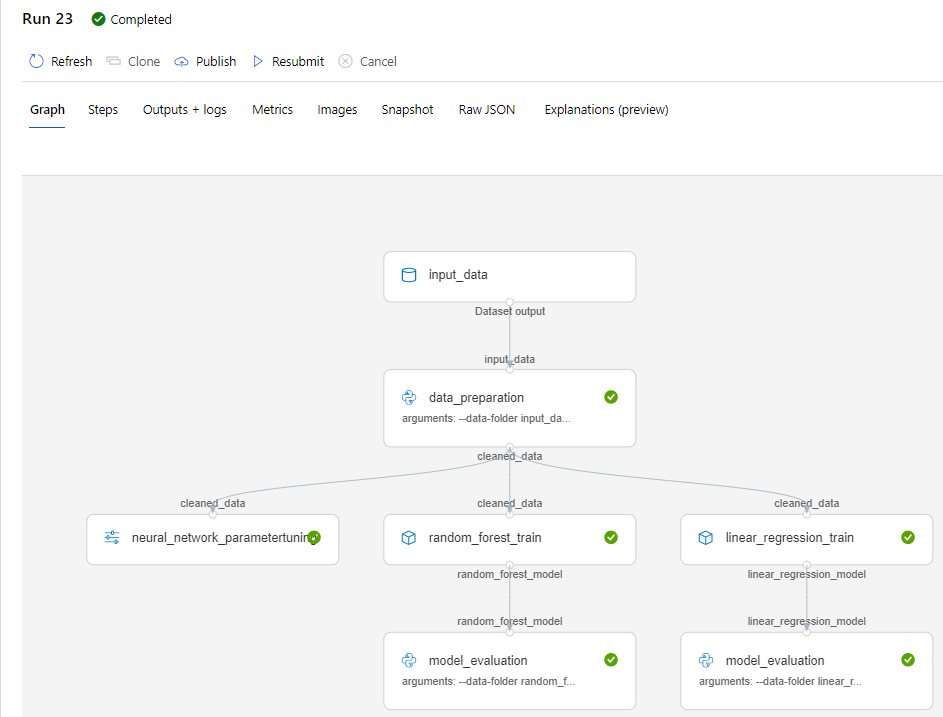

What is the Azure ML (Python) SDK?

Data scientists can use the SDKs to build and run ML pipelines. Key steps include:

- Managing supporting resources, such as computes and storage accounts, for organizing your ML experiments.

- Defining and configuring the pipeline steps by attaching Python scripts or notebooks that include the ML tasks you want to execute.

- Running and submitting your pipeline (locally or on one of the attached computes).

- Exploring the results in the workspace by checking logged metrics, parameters and artifacts.

- Saving your best model and preparing it for deployment.
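The steps listed above can be sketched in a few lines of Python. This is a minimal illustration that assumes the v1 SDK and a local workspace `config.json`. The helper names `log_metrics` and `submit_pipeline` are our own and not part of the SDK.

```python
def log_metrics(run, metrics: dict) -> None:
    """Inside a step script: record metrics so they appear under the run in the workspace.

    `run` is expected to be an azureml.core.Run, typically Run.get_context().
    """
    for name, value in metrics.items():
        run.log(name, value)


def submit_pipeline(steps, experiment_name: str):
    """From an IDE or notebook: build a pipeline from steps and submit it to an experiment."""
    # Azure imports are local so the sketch can be loaded without the SDK.
    from azureml.core import Experiment, Workspace
    from azureml.pipeline.core import Pipeline

    ws = Workspace.from_config()                      # reads a local config.json
    pipeline = Pipeline(workspace=ws, steps=steps)    # steps: e.g. PythonScriptStep objects
    run = Experiment(ws, experiment_name).submit(pipeline)
    run.wait_for_completion(show_output=True)         # block until the pipeline finishes
    return run
```

Within a step script, a call such as `log_metrics(Run.get_context(), {"accuracy": accuracy})` would attach the value to the current run, after which it can be compared across runs in the experiment view.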

In our example, we use the Python SDK.


Note that behind every block in the graph there is a .py file which we have built and added to Azure ML through the Python SDK. Below is the script that calls the Python SDK for our data_preparation step with our data_prep.py file:

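    import os

    from azureml.core.conda_dependencies import CondaDependencies
    from azureml.core.runconfig import RunConfiguration
    from azureml.pipeline.steps import PythonScriptStep

    ## Create pipeline steps
    ### Data preparation step

    # input_data, output_data1, compute_target and script_folder are assumed
    # to be defined earlier (not shown here).

    # Run configuration: the step runs in a conda environment with scikit-learn
    run_config1 = RunConfiguration()
    run_config1.environment.python.conda_dependencies = CondaDependencies.create(conda_packages=['scikit-learn'])

    step1 = PythonScriptStep(
        name="data_preparation",
        script_name="data_prep.py",
        # Command-line arguments handed to data_prep.py
        arguments=["--data-folder", input_data,
                   "--output-folder", output_data1],
        inputs=[input_data],
        outputs=[output_data1],
        runconfig=run_config1,
        compute_target=compute_target,
        source_directory=os.path.join(script_folder, 'data_prep'),
        allow_reuse=True,  # reuse earlier results when the step and its inputs are unchanged
    )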
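On the receiving side, data_prep.py has to accept the `--data-folder` and `--output-folder` arguments that the step passes in. The actual script is not shown in this insight, so the following is only a sketch of what its entry point could look like. The function names and the placeholder for the preparation logic are assumptions.

```python
import argparse
import os


def parse_args(argv=None):
    """Parse the two arguments that the pipeline step passes to the script."""
    parser = argparse.ArgumentParser(description="Data preparation step (illustrative)")
    parser.add_argument("--data-folder", required=True,
                        help="folder holding the raw input data")
    parser.add_argument("--output-folder", required=True,
                        help="folder to write the prepared data to")
    return parser.parse_args(argv)


def main(argv=None):
    args = parse_args(argv)
    # The output location may not exist yet on the compute target.
    os.makedirs(args.output_folder, exist_ok=True)
    # The actual preparation logic (not shown in the article) would go here:
    # read from args.data_folder, transform, write to args.output_folder.
    return args

# In the real script, main() would be called under an
# `if __name__ == "__main__":` guard at the bottom of the file.
```

When the pipeline runs, Azure ML is expected to resolve `input_data` and `output_data1` to concrete paths on the compute target before handing them to the script.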

When to use which components in Azure Machine Learning?

Auto ML:

In our experience, the Auto ML component can be used in the following scenarios:

- If you want to implement machine learning solutions without having a programming or data science background.

- If you want to save time and resources that are otherwise spent on the manual development of machine learning models.

- To perform exploratory research on which models may suit your data. These results can then guide your next steps when you ultimately build the model yourself (POC).

- To execute classification, regression or forecasting tasks on smaller datasets as the component’s computational time does not scale well when working with big data.

- When working with clean data. Note that the Auto ML component has a built-in data cleaning feature. However, we recommend ingesting data that has been cleaned beforehand to maintain control over this process.

Azure ML Designer:

We recommend using the Designer in the following situations:

- When working on machine learning solutions without having a programming background (in this case, data science knowledge is required).

- If you want to work in a visually attractive and user-friendly interface while retaining control over what happens in the ML process.

- When working with smaller datasets, as computational time increases significantly when big data is ingested. The Designer cannot use external computes to run its pipelines, meaning that the power of Apache Spark cannot be leveraged.

- If you are a professional data scientist and you want to use the Designer as a rapid prototyping tool.

Azure ML SDKs:

Lastly, using the Python SDK is especially useful in these cases:

- If you want to have full control over the entire ML development cycle and you want to design it specifically for the ML problem you are tackling.

- When working on real-life, large-scale ML solutions that require efficient computation. Python pipelines can run on a Databricks compute, allowing data scientists to process big data more efficiently.

- If you want flexibility in choosing which algorithms you use to train your models. The SDK pipelines can be used with any open source Python library that is currently available.

- If you want to log metrics, parameters and artifacts generated during the pipeline execution (this feature is not supported in the Designer/Auto ML component).

Our expertise

With our team of data engineering & data science experts, element61 has solid experience in setting up an Azure Modern Data Platform in which Azure Machine Learning takes up the role as ML Workbench.


Do not hesitate to contact us for more information.