Azure Batch is a compute management platform from Azure for running large-scale parallel batch workloads in the cloud. It makes provisioning many scalable, high-performance resources easy and affordable for end users.

When should we use Azure Batch?

Azure Batch is designed to run general-purpose batch computing in the cloud across many nodes that can scale with the workload being executed. It’s a perfect fit for ETL or AI use cases where multiple tasks can be executed in parallel, independently of each other.

Use cases can thus include:

- Engineering simulations – e.g. running simulations for each machine in parallel

- Deep learning and Monte Carlo simulations – e.g. running models with multiple different parameter sets, looking for the best performance

- ETL – e.g. running a transformation task in parallel

- Image processing and rendering

- And many more.

It’s key to understand that there is a difference between Azure Batch and Azure HDInsight. Most importantly, Azure HDInsight provides a cluster, whereas Azure Batch provides multiple nodes that allow parallelization yet don’t form a cluster by default. An in-depth look at the differences can be found here: How to parallelize your AI scripts using Azure?

How does Azure Batch work?

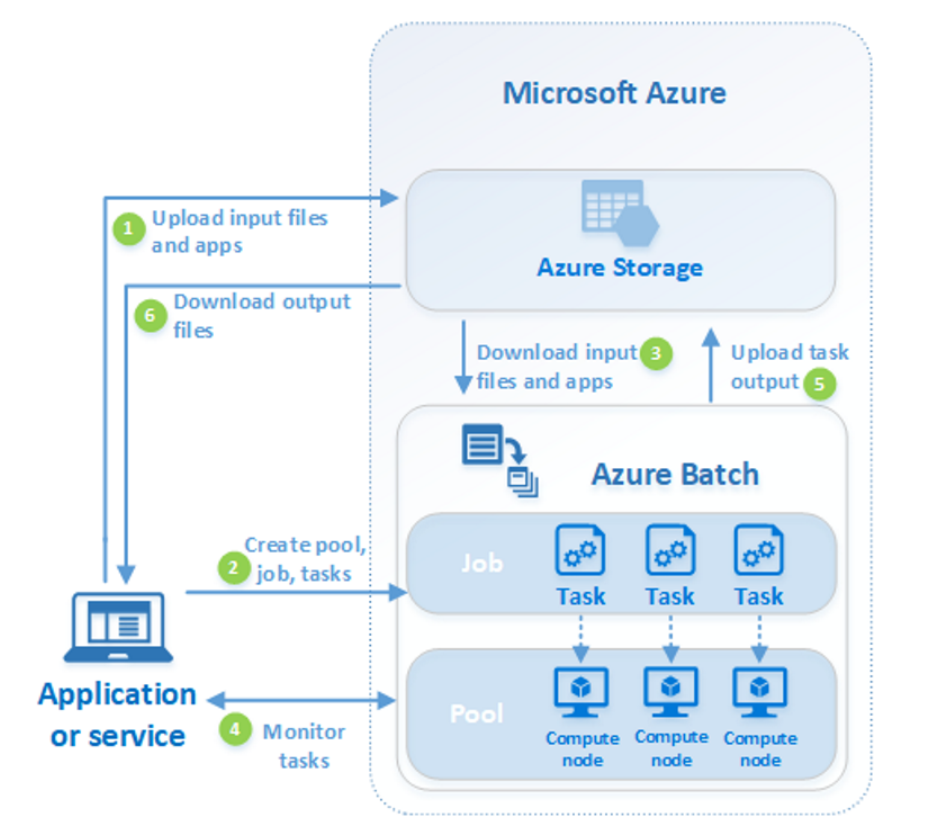

Azure Batch is a non-visual tool, meaning it has no actual user interface of its own. Once you have set up the Azure Batch component in Azure, you can create compute pools.

- Firstly, Azure Batch will assume there is data somewhere that it needs to crunch. Typically this data will reside in Azure Blob Storage or Azure Data Lake Store. Your first task is thus to make sure your data is uploaded to one of these storage locations.

- Once done, we can create a compute pool; this is a pool of one or more compute nodes to which you will assign jobs. When setting up the compute pool you will be asked for the name of the pool and what type of nodes the pool should contain (which OS, which software installed, linked to which Azure Storage, etc.).

- All compute nodes within a compute pool are thus identical. They are Azure VMs which are configured to your specifications.

- They can be Linux or Windows nodes

- They can be set up from an Azure VM image, a custom image or a Docker image

- They can be dedicated or low-priority nodes

Once the compute pool and its nodes are set up, we can assign work to it. Work takes the form of Jobs and Tasks:

- A Job is a collection of tasks, which are themselves run in parallel. Examples of jobs: run 10 different simulations of a model, or run 1000 iterations of a transformation script (where each transformation is a task).

- A Task is an individual run within the job. In the above examples, it would be 1 run of the simulation, 1 run of the ETL or 1 run of the AI job. Each run might include:

- Downloading input files from Azure Storage – e.g. CSV or Parquet files

- Running a transformation or AI script – e.g. in Python or R

- Uploading results back to Azure Storage
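The three steps of a single task can be sketched in plain Python. This is a minimal illustration and not the Azure Batch SDK: local folders stand in for Azure Storage, and the function name `run_task`, the `id`/`value` columns and the "double every value" transformation are all hypothetical placeholders.

```python
import csv
import shutil
import tempfile
from pathlib import Path


def run_task(input_file: Path, storage_out: Path) -> Path:
    """One task: download the input, transform it, upload the result.

    Local folders stand in for Azure Storage (an assumption of this sketch).
    """
    workdir = Path(tempfile.mkdtemp())

    # 1. "Download" the input file into the node's working directory.
    local_in = workdir / input_file.name
    shutil.copy(input_file, local_in)

    # 2. Run the transformation (placeholder: double every value).
    local_out = workdir / f"result_{input_file.name}"
    with local_in.open(newline="") as src, local_out.open("w", newline="") as dst:
        reader = csv.DictReader(src)
        writer = csv.DictWriter(dst, fieldnames=["id", "value"])
        writer.writeheader()
        for row in reader:
            writer.writerow({"id": row["id"], "value": float(row["value"]) * 2})

    # 3. "Upload" the result back to storage.
    storage_out.mkdir(parents=True, exist_ok=True)
    uploaded = storage_out / local_out.name
    shutil.copy(local_out, uploaded)
    return uploaded
```

In a real setup, steps 1 and 3 would talk to Blob Storage or Data Lake instead of copying local files, while step 2 stays an ordinary script.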

Once jobs are submitted, Azure Batch will dynamically allocate tasks to the different nodes. Each node can take on one or multiple tasks (depending on the number of cores the VM has). Once all tasks are finished, the job will be marked as complete and the compute nodes will be ready to run another job.
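To build intuition for this allocation, here is a toy simulation in plain Python. It does not reproduce how Azure Batch schedules internally; it simply assumes that every node has a fixed number of task slots and that the next queued task goes to whichever slot frees up first. The function name `simulate_job` and its parameters are hypothetical.

```python
import heapq


def simulate_job(task_durations, node_count, slots_per_node=1):
    """Greedy simulation: each queued task goes to the slot that frees up first.

    Returns (makespan, assignments), where assignments maps a node index to
    the list of task indices it ran. A toy model, not the real scheduler.
    """
    # Heap of (time_the_slot_is_free, node_index, slot_index) for every slot.
    slots = [(0.0, node, slot)
             for node in range(node_count)
             for slot in range(slots_per_node)]
    heapq.heapify(slots)

    assignments = {node: [] for node in range(node_count)}
    makespan = 0.0
    for task_id, duration in enumerate(task_durations):
        free_at, node, slot = heapq.heappop(slots)
        finish = free_at + duration
        assignments[node].append(task_id)
        makespan = max(makespan, finish)
        heapq.heappush(slots, (finish, node, slot))
    return makespan, assignments


# Six tasks of 10 minutes each on 3 single-slot nodes versus on 1 node.
parallel_time, plan = simulate_job([10] * 6, node_count=3)
serial_time, _ = simulate_job([10] * 6, node_count=1)
print(parallel_time, serial_time)
```

In this toy setup the job takes 20 minutes on three nodes instead of 60 minutes on one, which is the kind of speed-up independent tasks make possible.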

A visual representation of how to work with Azure Batch

How to work with Azure Batch

Azure Batch needs an application or service manager which creates pools, assigns tasks and monitors them if needed. This service manager can be the portal interface, yet we recommend using the available packages in Python or R. In practice you will script the creation of the pool, jobs and tasks within this Python (or R) script.

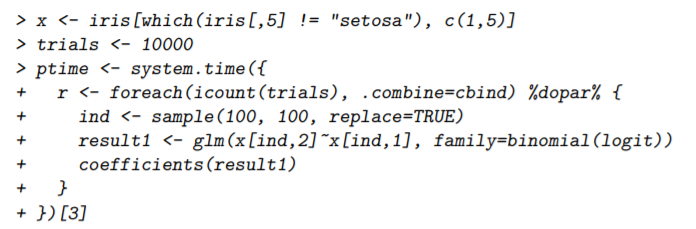

The doAzureParallel package is an amazing R package which allows you to assign tasks to Azure Batch by simply running a foreach-loop. The R script within the foreach-loop will be executed directly on the Azure Batch nodes and results will be merged together automatically and delivered back to the R user.

The Azure Batch package within Python is similarly powerful yet works differently. Through this package you can easily use Python to create pools and add jobs and tasks. It’s however up to the user to merge the results.
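Because merging is left to the user in Python, a small helper is typically part of the driver script. Below is a minimal sketch, assuming each task wrote one CSV file with the same header and that these files have already been downloaded to a local folder. The function name `merge_results` and the `result_*.csv` naming pattern are hypothetical.

```python
import csv
from pathlib import Path


def merge_results(results_dir, merged_file, pattern="result_*.csv"):
    """Concatenate per-task CSV files (same header) into one file.

    Returns the number of data rows written.
    """
    rows_written = 0
    writer = None
    with open(merged_file, "w", newline="") as dst:
        # Sort so the merged output does not depend on file system order.
        for part in sorted(Path(results_dir).glob(pattern)):
            with part.open(newline="") as src:
                reader = csv.DictReader(src)
                if reader.fieldnames is None:
                    continue  # skip empty files
                if writer is None:
                    # The first non-empty file defines the header.
                    writer = csv.DictWriter(dst, fieldnames=reader.fieldnames)
                    writer.writeheader()
                for row in reader:
                    writer.writerow(row)
                    rows_written += 1
    return rows_written
```

For results that are not row-based (models, images, metrics), the merge step would look different, for example picking the best-scoring run instead of concatenating.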

What are important features of Azure Batch?

Several distinctive features make Azure Batch stand out from alternative products. The most important ones are:

- It gives you the ability to run large-scale parallel workloads at very low cost through the use of low-priority VMs.

- It allows you to fully configure the nodes yourself, given that it supports Docker configuration.

- It allows you to create pools and run jobs through an easy-to-use code interface in R (through doAzureParallel) or Python.

- It can auto-scale, thus providing more nodes when needed. For this, it uses a formula, e.g. increase the number of compute nodes if more than X tasks are queued.

- It allows you to interactively monitor your jobs with Application Insights or Batch Explorer.

- It allows you to run any type of node: GPU instances, Linux or Windows nodes, Docker containers.

- It can integrate easily with Blob storage and Data Lake storage to fetch data for each task.
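The auto-scale rule mentioned in the list ("increase the number of nodes if more than X tasks are queued") can be illustrated in plain Python. Azure Batch expresses such rules in its own formula syntax; the function below is not that syntax, only a sketch of the logic. The names `target_node_count`, `tasks_per_node`, `min_nodes` and `max_nodes`, as well as the default values, are hypothetical.

```python
import math


def target_node_count(pending_tasks, tasks_per_node=4, min_nodes=1, max_nodes=25):
    """Return how many nodes a pool should have for the current queue length.

    Scales so that each node handles at most `tasks_per_node` queued tasks,
    then clamps the result between `min_nodes` and `max_nodes`.
    """
    needed = math.ceil(pending_tasks / tasks_per_node)
    return max(min_nodes, min(max_nodes, needed))


for queued in (0, 10, 500):
    print(queued, "queued tasks ->", target_node_count(queued), "nodes")
```

The upper bound matters in practice: without it, a burst of queued tasks could request far more nodes (and cost) than intended.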

Our expertise

element61 has used Azure Batch as a computing platform when challenged to perform large batch processes in the cloud for various use cases. Our team has the experience to help determine whether Azure Batch is a good solution and, if so, to implement it end to end. element61 has experience using Azure Batch from both R and Python.

Conclusion

Azure Batch is a computing platform for running massively parallel workloads in the cloud. It addresses both the limited computing power of on-premises resources and the expensive infrastructure you would otherwise need to build to run extensive workloads. Using multiple compute nodes to execute tasks in parallel leads to quicker and more efficient execution of your jobs, while you pay only for what you need.

More information is available at the Microsoft website: Azure Batch documentation.

Contact us for more information on Azure Batch!