Introduction

Early April 2012, Microsoft officially released SQL Server 2012. With respect to Business Intelligence & Data Warehousing, this version offers some interesting new functionality, of which this Insight reviews the highlights.

In the data integration area, Microsoft has made a step forward with the introduction of two components: Master Data Services and Data Quality Services. They re-enforce and broaden the current offering of Integration Services, Microsoft’s ETL (Extraction, Transformation & Loading) tool. In the new version of SSIS, Microsoft has made important enhancements to increase the productivity for developing and managing SSIS Packages.

On the data management side, with the Index Columnstore on the database and the Tabular Model in Analysis Services, Microsoft has introduced a new technology Xvelocity™ (previously known as Vertipaq™) that increases the performance of ad-hoc queries.

On the end-user side of BI project, Microsoft has made major innovations to render the BI platform more agile than ever with PowerPivot and Power View. End users have the possibility to create BI applications themselves with PowerPivot and create reports with Power View. These reports can be shared and deployed in SharePoint where colleagues can access them. They can even be imported in and updated from within PowerPoint.

In this Insight, we will highlight the new functionalities that we think are important for Microsoft Business Intelligence customers and professionals and comment on how we could use them in real life. Finally, it is not the goal of this article to review all the new functionalities of SQL Server 2012, we focus on the once relevant for BI & data warehousing.

Data Integration Area

Master Data Services

Master Data Services is Microsoft’s answer to the Master Data Management challenge. Master Data Management (MDM) is a set of tools and processes that aim to deliver a single clean and consistent view of each master data entity (product, customer, employee, financial account, …) that exists within the organization.

Master Data Services (MDS) was already present in SQL Server2008 R2 and was the result of the integration of the acquired technology of Stratature into SQL Server.

With SQL Server 2012 we get a more robust and mature solution because of the progress made both on usability via a new MS-Excel Add-in and on the performance of the product with enhancements in the Business Rules modules.

Figure 1:

Web GUI – Master Data Services

The new MS-Excel Add-in will allow data stewards, business users responsible for a particular data set, to edit members of an entity leveraging all the familiar functionality of Excel. New entities can be created either directly from Excel or imported from an existing table in SQL Server 2012 MDS. More complex tasks linked to the management of the model and the definition of business rules are done via a web interface.

Figure 2:

MS- Excel Add-in Master Data Services

Data Quality Services

Data Quality Services (DQS) is a brand new feature in SQL Server 2012. In short, DQS allows to check the quality of your data and clean it. It enables you to build a Data Quality knowledge base, and use that knowledge base to perform a variety of critical data quality tasks such as correction, enrichment, standardization and de-duplication. DQS enables a data steward or IT professional to maintain the quality of the company data.

Figure 3:

Data Quality Services GUI

DQS comes with a user interface where users can manage their "knowledge base” by creating domains and attaching rules to the domain. Typical rules are :

- Check data type

- Check format (like e-mail)

- Provide synonyms to avoid duplication of data

- Provide Term based where a code can be transformed into a long description (eg. US can become United States)

- Fuzzy Lookup with an external data set

Figure 4:

Editing rules in the knowledge Base of Data Quality Services

In a second step, you can apply your "knowledge base” to a source of data. You will have to map the source columns with the domains that you have defined in your "knowledge base”. You can then execute the rules on the data and analyze the results. While analyzing the results, you have the possibility to accept or reject corrections made by the DQS engine. Eventually, you can export the cleansed data to any data source you want.

As a result of your first iteration of the Data Quality project, you will get a Data Quality knowledge base. This knowledge base can then be used within SSIS via the "DQS” data flow task to automatically clean your data, eg. prior to loading in the data warehouse.

Figure 5: Using the "DQS” data flow task within a SSIS package

In a BI environment, the quality of the data is a key factor of success because wrong data leads to wrong decisions. The development cost of a solution is inversely proportional to the quality of your data. Until now, a tool that allows capturing and sharing knowledge in a continuous process between business and IT people had been missing in the Microsoft BI stack. With this new solution, the possibility exists for our BI projects to move data quality rules previously defined in SSIS to a central location where they can be managed by business people and re-used between multiple projects.

Integration Services

There are a lot of enhancements that make SSIS a better ETL-tool. The main concepts remain the same: Connection, Task, Data flow. However an important effort has been made to improve the productivity of developers, the ease of management and deployment of a SSIS solution.

Development Interface

- BIDS has been replaced by SQL Server Data Tools. A new development interface with the look and feel of Visual Studio 2010.

- "Undo” feature has been added

- Improvement of copy/paste functionality

- Improved re-mapping of columns

- New possibilities to arrange SSIS toolbox (new categories, favorites, etc.)

- Possibility to add a carriage return in annotations

- Addition to mention "Fx” on the icon of the components using expressions.

- Possibility to debug a data flow script task

- The XML code has been reviewed and shortened. The readability of the XML code has been much improved and we can "merge” packages. It is now possible to work with multiple developers on the same DTSX file.

Figure 6: SQL Server Data Tools

New Components/features

- New Change Data Capture components integrated in the Data flow task component that simplifies the use of the CDC component. You also have the possibility to use the CDC on top of ORACLE database.

- New DQS components that can be used to cleanse data

- New functions that can be used in the expression: LEFT, TOKEN, TOKENCOUNT and REPLACENULL

- Support for a varying number of columns in flat files and embedded qualifiers

SSIS Management

The management of SSIS has been enhanced in SQL Server 2012. We now have a real Integration server that is capable of supporting multiple instances. There is a new database called "SSISDB” that contains all the packages and all the metadata to execute the packages as well as the logging of all the executions.

Figure 7: Integration Services Instance

In SSIS 2008 there was no direct relation between the packages at the server side and we had to rely on a configuration file or parent package variables to use consistent variables within all the packages.

With SSIS 2012, the packages belong to a project and all variables that need to be shared can be defined directly at the project level, and the same is true for the connection strings. Furthermore, the concept of environment has been introduced. An environment is a collection of variables with values attached to it. These variables can be referenced at execution time.

Logging has also changed in SSIS 2012. Now everything is automatically logged into the "SSISDB” database: Execution time, Execution duration, number of errors, errors messages, number of row sent, etc. On top of this metadata there are built-in integration dashboards, displaying the most important statistics. You also have management views at your disposal in which all the details are logged. With regards to performance monitoring, this new solution will reduce the need for custom logging which was required with SSIS 2008.

Figure 8: SSIS Execution Dashboard

Migration of SSIS 2008 Packages

All these new features are very promising but what will happen to the packages that were developed with SSIS 2008? There is still the possibility to run them in legacy mode so there are no complications here.

If you want to migrate to SSIS 2012, there is a migration wizard that will help you to convert your packages. The migration wizard will:

- convert your existing config files into project variables,

- analyze your parent packages variables to define them at the project level as well.

The wizard will not be able to convert expressions automatically into project variables. This is something you will have to do yourself in SQL Server Data Tools.

Data Management

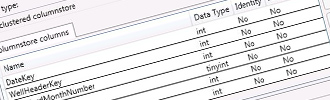

Columnstore Index

At the database side, there is one major innovation that will be very interesting for all your BI projects: the columnstore index. A "columnstore” index stores each column in a separate set of disk pages, rather than storing multiple rows per page which is the way data is stored traditionally.

Benefits of this are :

- Only the columns needed to solve a query are fetched from disk (this is often less than 15% of the columns in a typical fact table).

- It is easier to compress the data due to the redundancy of data within a column.

- Buffer cache hit rates are improved because data is highly compressed, and frequently accessed parts of commonly used columns remain in memory, whereas infrequently used parts are paged out.

- On average this technology increases query performance by a factor 10 compared to traditional row based systems.

Figure 9: Create a Columnstore Index in SQL Server 2012

There is one limitation with columnstore indexes: you cannot directly update tables with index "columnstore” using INSERT, UPDATE, DELETE, and MERGE statements, or bulk load operations. To load data into a table with columnstore index, you need to disable the index before you load the data and then the index needs to be rebuild. This can be quite a long task if you have very large tables. You can use "switch partition” to overcome this problem and disable the index columnstore only on the new partition that you have just created.

We believe that "columnstore” index will be of great use in a Data Warehouse environment. It will reduce the time spent on query performance optimization and the need to create additional aggregate tables. This function will probably create a new interest in relational reporting solutions and it will also boost the performance and usage of the lower layers of your Data warehouse.

We can only regret that the columnstore index option is not part of the SQL Server Business Intelligence edition (in terms of licensing), even though it will be intensively used for Business Intelligence applications. You will have to buy the Enterprise License of SQL Server 2012 to benefit from the columnstore index-functionality. This is most probably also proof of how much Microsoft thinks this functionality is worth going forward.

Analysis Services

Microsoft has released a new product within Analysis Services 2012: The Tabular Model. It is a server version of the current "personal BI” solution PowerPivot. With this new product and the new reporting solution "Power View” that can report on top of the Tabular Model, Microsoft is trying to compete with the pure "in-memory” players.

Microsoft did not abandon the disk-based OLAP technology obviously. Therefore in Analysis Services 2012, we will find two different products: Tabular Model and Multidimensional Model. Both products can co-exist on the same machine but they have their own instances. For an in-depth review of this topic please see this Insight : "The choice between Tabular or Multidimensional models in SQL Server Analysis Services 2012”.

Tabular model

Tabular models are in-memory databases in Analysis Services. Using state-of-the-art compression algorithms and multi-threaded query processing, the Xvelocity™ engine delivers fast access to tabular model objects and data through reporting client applications such as Microsoft Excel and Microsoft Power View.

The main advantages of the Tabular model are:

- It is performant: in most of the cases, it will be faster than the Multidimensional model

- You can directly import a PowerPivot model

- It is easier to design because DAX language is very similar to Excel formulas and DAX concepts are similar to relational database concepts

- It is the only source of data that you can use with Power View

Even though there are a lot of exciting functionalities that come with the Tabular Model, there are still some limitations: in terms of handling large data volumes, you are limited with your RAM memory, and in terms of complexity of modeling where in some circumstances the Multidimensional model might be the best choice.

Figure 10:

Development Interface of the Tabular model

While Power View can only use the Tabular model as a source, any other reporting tool that can generate MDX like Microsoft Excel, Reporting Services, etc. can connect to a Tabular model. This is made possible because the MDX queries can be translated into DAX queries by the Tabular model engine.

Reporting Services

Open XML

The Reporting Services Excel rendering extension, new in SQL Server 2012, renders a report as an Excel document that is compatible with Microsoft Excel 2007-2010 as well as Microsoft Excel 2003 with the Microsoft Office Compatibility Pack for Word, Excel, and PowerPoint installed. The format is Office Open XML and the file extension is XLSX.

SharePoint integration

Reporting Services is now completely integrated with SharePoint 2010. You will manage reporting services entirely through SharePoint. Report viewer has now become a true service application. Furthermore, Reporting Services is now delivering the same performance in "SharePoint integrated" mode than in "native” mode. Thanks to its complete integration with SharePoint, you can:

- Save reports directly in SharePoint libraries

- Within web pages in SharePoint manage subscriptions and schedules of reports execution

- Add report viewer web part in any pages or dashboards exiting in SharePoint and personalize its appearance

- Re-use security roles defined in SharePoint to grant access to reports

Figure 11: Reporting Services in integrated mode with SharePoint

Power View

Power View provides intuitive ad-hoc reporting for end-users. They can easily create and interact with views of data from Tabular models or PowerPivot in SharePoint. Power View is a browser-based Silverlight application launched from SharePoint Server 2010.

Figure 12: Create a report with Power View

The simplicity of use and the capability to efficiently create dynamic reports that remain dynamic once exported to Power Point, will make Power View a very appealing tool for Business Analysts.

Figure 13:

Power View reports exported in PowerPoint

Power View does not replace Report Builder, the report authoring tool for richly designed operational reports. Power View addresses the need for Web-based, ad hoc reporting. You can only access Power View through SharePoint.

Alerting

This new function gives end-users the possibility to subscribe not to a report but to the data within a report. The end-user can now define his alerting rules and receive a mail each time that the alerting rules criteria are met, based on a schedule. The alerting is built on top of the existing data feed format of SSRS. This function is managed and is only available when Reporting Services is integrated with SharePoint.

Figure 14:

Editing Alerting rules with Reporting Services 2012

This is a powerful function to notify only relevant data changes to your users. We see a great potential for this functionality in the operational BI applications where users want to be warned as soon as possible when something goes wrong or off track.

Conclusion

With SQL Server 2012, Microsoft proposes a unified BI vision between personal and corporate BI with considerable evolutions on the axes of performance, data management and self-service BI.

We understand the path proposed by Microsoft which is to create footbridges between Business and IT by allowing end-users to experiment with the new solution on their own via Power Pivot and to involve IT later on once it has reached sufficient maturity. With the release of Tabular model and Power View, Microsoft complements the traditional report-centric architectures with interactive visualization tools using fast in-memory technology. This is a first credible response from traditional BI vendors to the growing interest of BI users in "Agile BI”.

Based on our experience with the Denali and RCO version, we currently do not see any technical reasons not to choose SQL Server 2012 for any new Business Intelligence Project. Despite being a first release, the extensive and broad beta-testing program has resulted in a stable product release from Day 1.

One slight note of caution, one can regret that many of the new BI functionalities require the Enterprise Edition of Microsoft SharePoint and/or Enterprise Edition of SQL Server. This might prevent enterprises with limited IT budget to benefit from all these new tools, but these editions still offer excellent Value for Money compared to some more expensive BI technologies around.