Organization

Meat&More is a fast-growing company in the food industry specialising in meat and ready-to-eat products. Meat&More works end-to-end and is active from production to retail sales. It has two commercial channels:

- Buurtslagers, which provides retailers such as Smatch, Carrefour, Lidl and Delhaize with a meat and ready-to-eat offering within their stores

- Bon'Ap, a Meat&More store concept offering a range of meat and ready-to-eat products.

Challenge

With thousands of products and hundreds of stores, Meat&More set up a Supply Chain 2020 program aimed at making sure the right product is in the right quantity in the right store. Not only would this support financial return through less food waste, time saved and recovery of lost sales, it would also strengthen client satisfaction through fewer stock-outs, increased freshness and environmental responsibility.

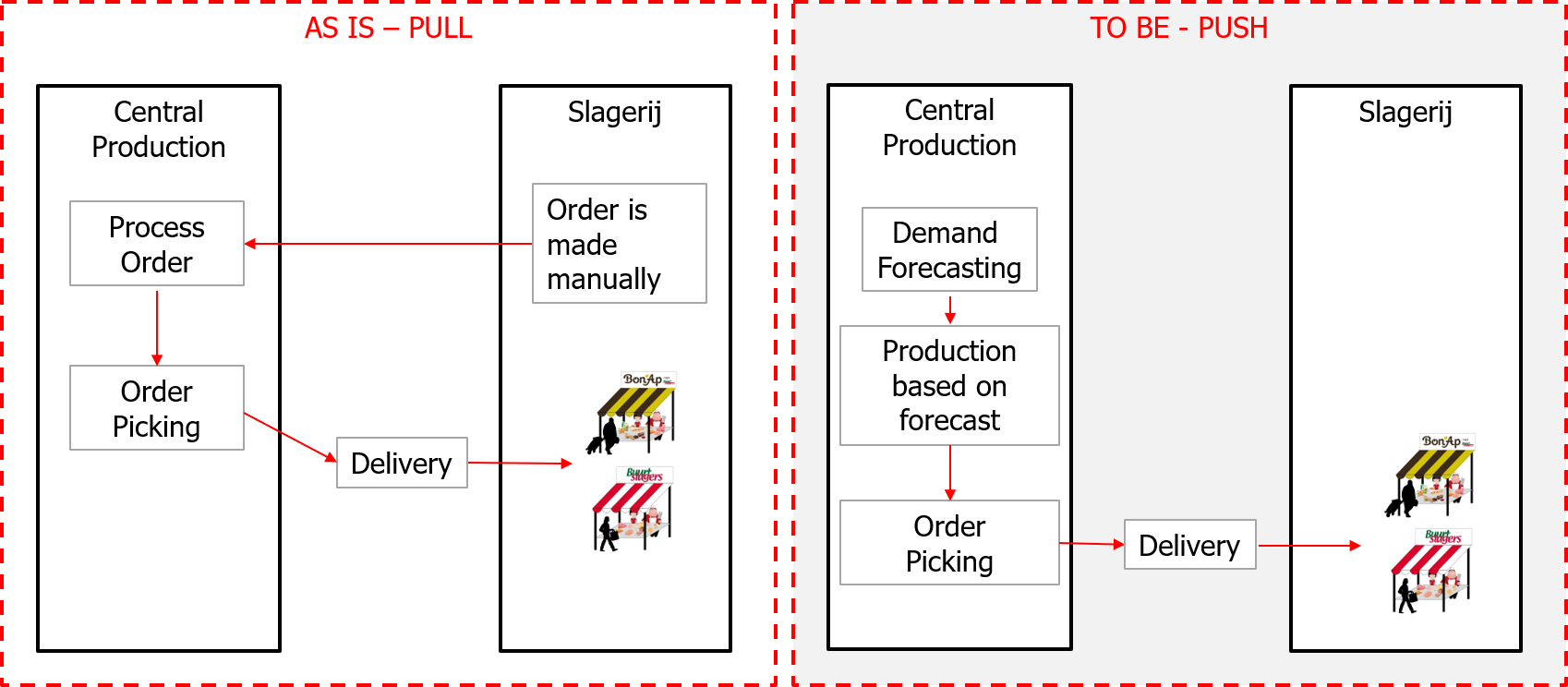

The objective was to go from a pull-model to a push-model:

- In the existing pull-model, orders are placed just-in-time by the store manager, made or picked to order, and shipped to the stores.

- In a smart push-model, expected quantities are forecast ahead for all products and stores, production is optimally planned and products are delivered (i.e. pushed) based on this forecast.

If the forecast can smartly take into account weather, seasonality, price sensitivity, promotions and past sales, this push-model can result in a high level of automation, optimized Supply Chain planning and better execution of 'the right product is in the right quantity in the right store'.

Project approach

Meat&More worked with Moore Belgium Strategy & Operations and element61 to design and set up the Supply Chain principles and AI forecast engine. The project consisted of two phases:

- Phase 1: design

The initial phase aimed at designing the new Supply Chain set-up including a draft of new processes and roles & responsibilities.

In parallel, a Proof of Concept was built to validate whether AI could truly deliver an accurate sales forecast based on past sales, promotions, weather and seasonality.

The outcome of Phase 1 showed two key insights:

- The AI algorithm was strong in forecasting sales up to 14 days ahead. With an error rate below 5% for most products in the Proof of Concept (i.e., root-mean-square error divided by mean sales), a push-model approach could work to optimize Supply Chain planning and save time.

- The implementation would require new tools beyond the AI set-up, including an updated demand application for store managers (to monitor the forecast) and a dynamic stock calculation (taking into account current stock, forecast and product shelf life).
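The dynamic stock calculation mentioned above combines current stock, the forecast and product shelf life. The case study does not give the actual formula, so the following is only a minimal sketch under stated assumptions: the function name, the `cover_days` parameter and the rule "cover forecast demand until the next delivery, but never beyond shelf life" are all hypothetical.

```python
from math import ceil


def replenishment_quantity(current_stock, daily_forecast, shelf_life_days, cover_days):
    """Suggest how many units to push to a store (illustrative rule only).

    current_stock    -- units on hand in the store
    daily_forecast   -- forecast sales per day, starting with tomorrow
    shelf_life_days  -- days the product stays sellable after delivery
    cover_days       -- days to cover until the next delivery (assumed input)
    """
    # Never plan stock for days on which the product would already be expired.
    horizon = min(cover_days, shelf_life_days, len(daily_forecast))
    expected_demand = sum(daily_forecast[:horizon])
    # Only ship what the stock on hand does not already cover.
    return max(0, ceil(expected_demand - current_stock))


# Short shelf life limits the horizon to 2 days even though 3 are requested.
print(replenishment_quantity(4, [3.2, 2.9, 5.1, 4.0], shelf_life_days=2, cover_days=3))
```

A real implementation would likely also account for safety stock, pack sizes and stock that expires during the horizon; those are left out here to keep the idea visible.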

- Phase 2: implementation

The objective of the implementation was to set up all the data flows and technology to deliver this Supply Chain set-up easily to 250 stores for all (i.e. thousands of) products. At this stage, scalability was key and element61 guided the client toward a Cloud platform to run all data & AI computations.

After a vendor selection exercise, Meat&More selected Microsoft Azure as their trusted Cloud platform. The architecture design, the set-up and the development were done in co-development between the element61 and Meat&More teams.

The solution for AI demand forecasting

Meat&More has thousands of products in hundreds of stores. In the past, demand forecasting was done manually based on traditional tools (Excel) and experience. The manual process focused on the outliers, given that manually forecasting all products for all stores was too time-intensive.

In a push-model, an accurate demand forecast is key and the context of each product has to be taken into account: e.g. BBQ products peak on summer weekends, while gourmet products are popular around holiday periods. At the same time, every store has its own specificities: e.g. a product might sell well in one region of Belgium yet not in another. As a result, it was important to be specific per product per store rather than generic: one forecasting model cannot be expected to predict both BBQ ribs and chicken soup accurately.

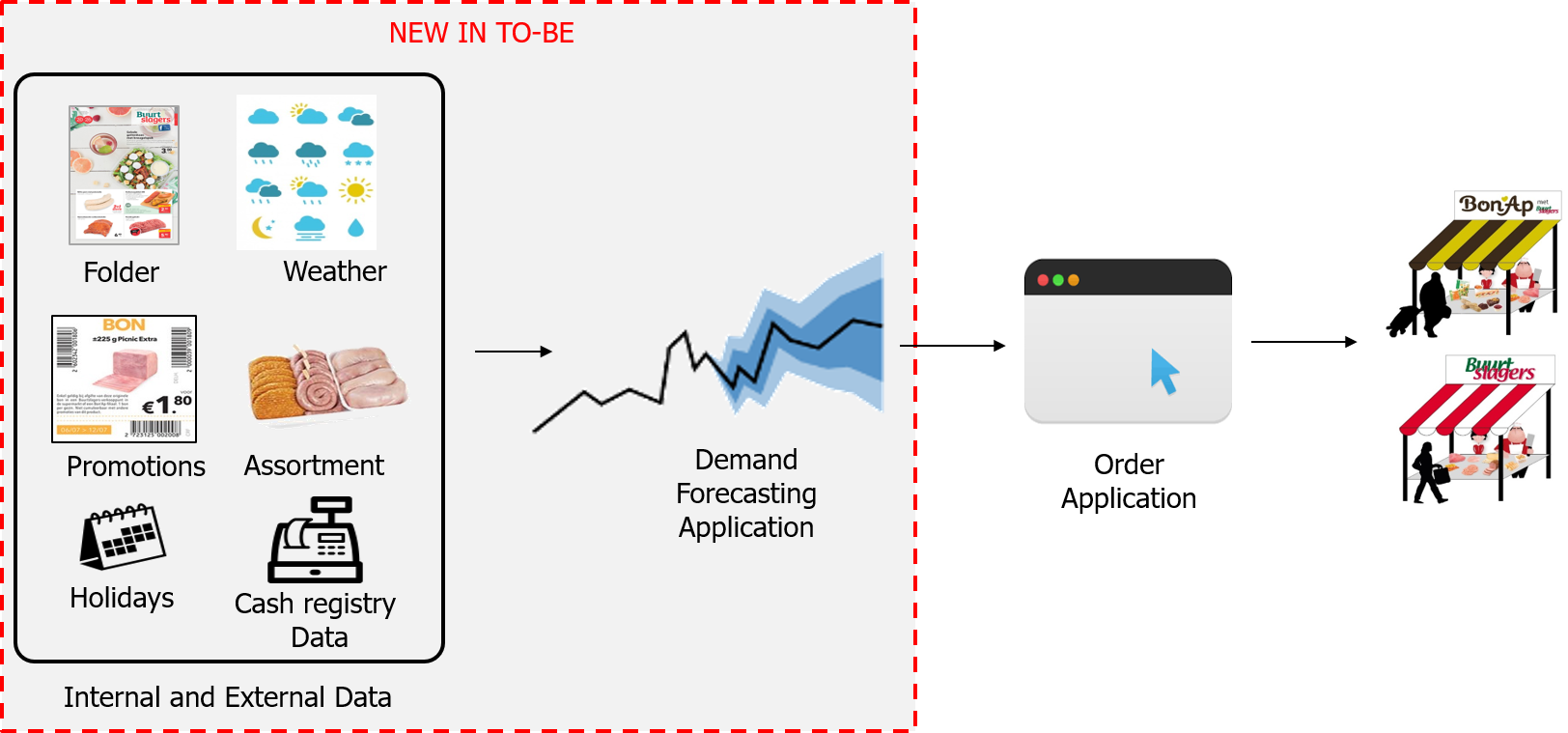

The chosen approach was to build a predictive model per product and per store, with all models leveraging a broad set of shared drivers including past sales, seasonality, holidays, promotions, price changes, weather, assortment changes and others.
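To make the "one model per product and per store, shared drivers" idea concrete, here is a minimal sketch of how sales records could be split into one series per (product, store) pair and how a row of driver features could be derived for a given day. The record layout, the function names and the specific features (7-day lag, 7-day mean, weekend flag) are illustrative assumptions, not the feature set used in the project.

```python
from collections import defaultdict
from datetime import date
from statistics import mean


def group_by_product_store(records):
    """Split flat sales records into one time-ordered series per (product, store)."""
    series = defaultdict(list)
    for record in records:
        series[(record["product"], record["store"])].append(record)
    for rows in series.values():
        rows.sort(key=lambda r: r["day"])
    return dict(series)


def driver_features(day, past_sales, on_promo, max_temp_c):
    """Build one feature row from shared drivers (hypothetical feature set).

    past_sales must hold at least the last 7 daily sales, oldest first.
    """
    return {
        "weekday": day.weekday(),          # 0 = Monday ... 6 = Sunday
        "is_weekend": day.weekday() >= 5,  # seasonality within the week
        "month": day.month,                # seasonality within the year
        "sales_lag_7": past_sales[-7],     # same weekday one week earlier
        "sales_mean_7": mean(past_sales[-7:]),
        "on_promo": on_promo,              # promotion driver
        "max_temp_c": max_temp_c,          # weather driver
    }


records = [
    {"product": "bbq-ribs", "store": "S01", "day": date(2019, 6, 14), "units": 20},
    {"product": "bbq-ribs", "store": "S02", "day": date(2019, 6, 14), "units": 7},
    {"product": "bbq-ribs", "store": "S01", "day": date(2019, 6, 13), "units": 14},
]
series = group_by_product_store(records)
row = driver_features(date(2019, 6, 15), [10, 12, 9, 11, 14, 20, 22], True, 27.5)
print(len(series), row)
```

Each (product, store) series would then get its own model, while every model is fed rows with the same feature columns.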

Internal and External data is used for the AI forecast

In moving from a pull-model to a push-model, it was important for Meat&More and its store managers to have transparency in the AI algorithm: "what is driving this forecast and why is it forecasting X?". To provide this transparency, it was decided not to work with deep learning but rather, in this phase, with simpler yet performant AI techniques.

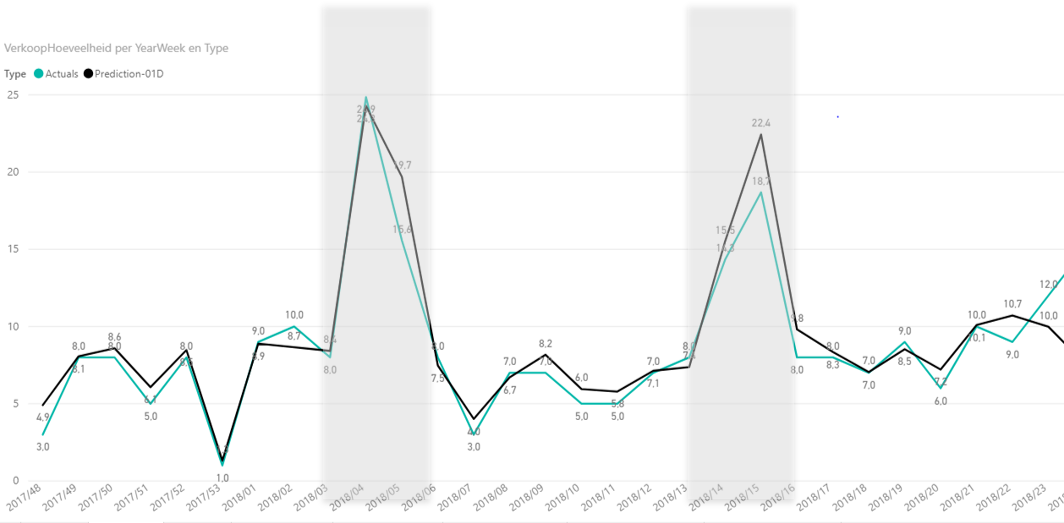

The demand forecasting was not a typical time-series set-up. Although trends and seasonality play a role, the importance of drivers such as promotions and weather changes pointed to a driver-based regression approach. The best accuracy was achieved with a RandomForestRegressor, combined with hyperparameter tuning and careful removal of outliers from the baseline. The accuracy varied per product, but the headline figure was an error below 5% on 60% of the tested products over a cross-validated test period (i.e. RMSE divided by mean sales).
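The error figure quoted here is RMSE divided by mean sales. As a minimal sketch of that metric, and of how a "share of products below 5%" figure could be derived from it, consider the following; the function names and the sample numbers are made up for illustration and are not project data.

```python
from math import sqrt


def normalized_rmse(actual, forecast):
    """Root-mean-square error divided by mean actual sales."""
    if not actual or len(actual) != len(forecast):
        raise ValueError("actual and forecast must be non-empty and of equal length")
    mean_sales = sum(actual) / len(actual)
    if mean_sales == 0:
        raise ValueError("mean sales is zero, so the ratio is undefined")
    mse = sum((a - f) ** 2 for a, f in zip(actual, forecast)) / len(actual)
    return sqrt(mse) / mean_sales


def share_within_target(error_by_product, target=0.05):
    """Fraction of products whose normalized RMSE is below the target."""
    hits = sum(1 for error in error_by_product.values() if error < target)
    return hits / len(error_by_product)


# Illustrative numbers only.
error = normalized_rmse([100, 120, 80, 100], [104, 116, 84, 100])
share = share_within_target({"a": 0.03, "b": 0.04, "c": 0.08, "d": 0.02, "e": 0.10})
print(round(error, 4), share)
```

Dividing by mean sales makes the error comparable between high-volume and low-volume products, which matters when one threshold is applied across the whole assortment.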

The result is an AI algorithm which runs daily for every product and store and forecasts quantities up to 14 days ahead. The long-term forecast (14 days) is used for MRP & purchasing and the short-term forecast (i.e., sales for tomorrow) is used for store replenishment and delivery.

Plotting the forecast vs. actual sales for Vol-au-vent

The solution for scalability: a Hybrid Data Platform using Microsoft Azure

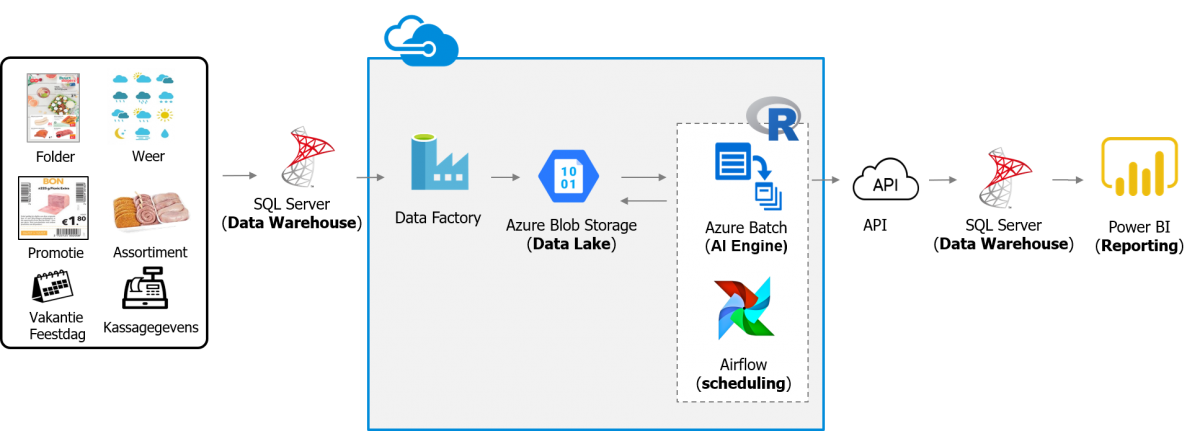

The algorithm needs data and needs compute power to run. To allow for scalability and flexibility, Meat&More decided to complement their existing BI set-up with a Data Platform in the Microsoft Azure Cloud.

Using an on-premises gateway, various datasets including transactional sales data, promotion data and weather data are ingested daily into the Azure Data Lake (Blob Storage) using Azure Data Factory. Once loaded, the scheduling tool Airflow triggers the forecasting cycle, in which a cluster of computing nodes (up to 200 VMs) in Azure Batch is launched in parallel to train (once per week) and score (every day) the demand forecasting application.

The Data Platform supports the use of open-source tooling such as R and Spark to allow any new AI techniques in the future to be absorbed as quickly as possible. Microsoft Azure Cloud enabled this open-source focus through services such as Azure Container Registry and Azure Batch, while Docker technology gave us full flexibility in embedding our AI algorithm in an open-source set-up configured with best practices.

To allow for future growth, a DevOps way of working was set up, including collaborative code development, unit testing, continuous integration and deployment, and infrastructure as code.
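The cycle described above (score every day, train once per week, fan out over up to 200 nodes) can be sketched in plain Python as follows. This is not Airflow or Azure Batch code: the function names are hypothetical, and the choice of Monday as the weekly training day is an assumption, since the source only says "once per week".

```python
from datetime import date

MAX_NODES = 200  # upper bound on parallel VMs mentioned in the text


def plan_run(run_date, training_weekday=0):
    """Return the steps for one daily cycle.

    Scoring happens every day; training only on one weekday
    (0 = Monday, an assumed choice).
    """
    if run_date.weekday() == training_weekday:
        return ["train", "score"]
    return ["score"]


def split_into_batches(pairs, max_nodes=MAX_NODES):
    """Distribute (product, store) pairs round-robin over at most max_nodes batches."""
    node_count = min(max_nodes, len(pairs))
    if node_count == 0:
        return []
    return [pairs[i::node_count] for i in range(node_count)]


pairs = [(f"product-{p}", f"store-{s}") for p in range(100) for s in range(10)]
batches = split_into_batches(pairs)
print(plan_run(date(2019, 5, 6)), len(batches), len(batches[0]))
```

Because every (product, store) model is independent, each batch can be handed to a separate node without any coordination between nodes, which is what makes this kind of workload easy to scale out.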

Data Platform Architecture

Results

At the time of writing (May 2019), this AI demand forecasting set-up is running live and is automating the Supply Chain delivery to 5 stores for around 100 products. With positive first responses, the roll-out to more products and more stores is planned in Q3 and Q4 2019.

Additionally, Meat&More has absorbed data and AI capabilities into its organization. With a solid data platform in Microsoft Azure Cloud and a proven first AI case, further use cases are being defined in the areas of sales, marketing and supply chain.

Coaching and co-development

This project was realised 100% through co-development and coaching. A Meat&More team of 1 Data Engineer and 1 Data Scientist was complemented by an element61 team of 1 Data Engineer and 1 Data Scientist. During the implementation period of 6 months, the time spent by the element61 team gradually decreased while the internal team's allocated time increased, as it took more ownership and more of the development into its own hands. In practice, co-development meant that we explained new Azure technologies, shared best practices and clarified our approach while developing the end-to-end AI and Data solution step by step.

Want to know more?

- Watch the video customer case-study to learn more from this project with Meat&More

- Contact us to learn how to get started with AI demand forecasting