Introduction

"You can’t always get what you want. But if you try some time, you just might find, you get what you need” is the major punch line to take away from the song "You can't always get what you want” by the Rolling Stones which exactly recapitulates what agile BI is all about.

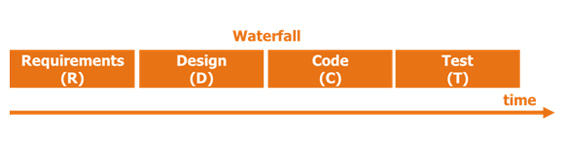

In a purely traditional waterfall approach, everything happens in a fixed and drawn-out sequence. It starts with a hopefully (?) complete set of requirements, which is translated up front into an exhaustive data model & design. The solution is then built by means of ETL, reports & typically cubes, and finally the whole thing is presented to the business users.

This approach may ensure that you deliver the BI system that the business users initially asked for, but it virtually guarantees that business users won’t get what they actually need.

The issues here are diverse:

- the risk of misinterpreting the requirements by the BI developers,

- the risk that requirements might have changed during the lifecycle of the project,

- the risk of incorrectly or incompletely expressed requirements,

- the risk of needless requirements,

- the risk that business users did not know exactly what they wanted or could expect from the BI solution in the first place.

The result is disappointed business users who only “see” the real impact of their poorly expressed or poorly interpreted needs when it is too late. On top of that, all previously stated requirements were frozen in time, whereas in reality lots of things (read: their requirements) may have changed in the meantime.

To avoid these kinds of problems, an evolutionary method is needed for dealing with changing requirements, which are captured through regular collaboration between business & IT.

Business versus IT

When business and IT are confronted with each other in terms of BI projects, most often different issues will pop up.

Business might say things about IT such as:

- Why does it always take so much time for IT to deliver something?

- The solutions built by IT always seem so overly complex.

- Why do these projects constantly run over budget and over time?

- IT expects that the solutions it builds will never require any changes, and as a result those solutions cope badly with change.

- IT clearly does not speak any business language.

IT in turn might say things about business such as:

- We always have to deliver in an unrealistic timeframe; business is too impatient.

- Business people can’t cope with all the technical constraints we have.

- Who is going to do the modeling anyway?

- Nobody in the business seems to have time to work out all the details in order for the BI solution to fully function in the way they want.

- Business is so unrealistic in their expectations. We can’t make everything work at once.

In other words: Business is from Mars, IT is from Venus.

Business wants speed, while IT requires stability; Business needs flexibility, where IT longs for control; Business depends upon changes, while IT lives by its standards; Business wants it in the short term, where IT only thinks long term.

Wouldn’t it be joyful if we could marry the best of both these worlds?

Agile BI defined

How would we then best define agile BI? Maybe it is best to kick off by stating what agile BI is definitely not.

Currently the term agile in all its varieties - be it Scrum, Kanban, XP, freestyle or any other adopted framework for agile projects - is heavily overused and, may we say, “overhyped” as the solution to all problems.

Whenever the term agile is dropped, everybody involved assumes automatically that the success of the BI project is 100% guaranteed and that agile offers a silver bullet. Agile isn’t a synonym for plain easy. Agile does not mean that you do not need any planning. Nor does it mean that you don’t need to deliver any documentation.

Moving faster than in a waterfall approach does not necessarily mean you are following a proper agile approach. Nor does agile mean that the typical software development cycle is simply abandoned, or that the choice for a robust architecture is postponed indefinitely. To wrap up this section with a small anecdote: only recently I experienced a situation where two BI delivery companies were targeting the same customer.

The 1st company mentioned the term agile all over the place, but in practice wasn’t agile at all; they just did less of everything. The 2nd company used a waterfall method, which in practice tended more towards an agile approach. The customer chose the first delivery company …

So what does agile BI stand for then?

Agile BI is a project approach which can rapidly and cost-effectively adapt to meet changing business needs through true business-IT collaboration in a self-organizing manner, and as such creates business value through the early & frequent delivery of working BI software.

Agile BI enables seamless collaboration between BI developers & BI users. It includes a rapid development methodology which solicits end-user input early and often and aims to reduce the time needed to build BI solutions. As such, agile BI combines a set of processes, methods, tools & technologies in order to help BI users be more flexible and more responsive to ever-changing business requirements. This flexibility is based on ongoing scoping, rapid development iterations, evolving requirements, frequent testing and, finally, ongoing and direct communication between business and development.

The most significant themes within these characteristics are pointing to a BI project approach which should be able to respond in a flexible and fast way to changing requirements based on a tight collaboration with business. Changing in this perspective means: unknown, new & changed.

Waterfall issues

In order to achieve agility, the purely traditional waterfall approach has to be abandoned. A pure waterfall approach has simply proven to be inefficient and overly expensive.

Within a waterfall approach it can take three or four, up to six or seven, or even more months to complete a BI project. Business people are only solicited during the analysis & user acceptance test phases – in other words at the beginning and at the end. During the design, build & technical test phases, which can take up to 70% of the complete project time, almost no business input or feedback is sought.

Besides the absence of continuous business interaction, all requirements instantly turn into a kind of frozen state. During the analysis phase, which requires multiple consecutive weeks of the business users’ attention, all (!) requirements need to be detailed in full, even though the business users are most likely unaware of what to expect from the final BI solution.

Instead, the BI staff shows a bunch of data models and expects the business users to judge, from a purely theoretical visualization, whether their needs are covered or not. When the BI solution is finally ready to be tested, the business users again need to set aside a considerable amount of time.

Any changes, which typically pop up during the following weeks and months, are easily turned into change requests that only result in going back to the drawing board. It’s even worse when certain initial requirements are no longer needed, but are still being built, tested and implemented for no reason whatsoever.

It is essential to realize that partial functionality in a few weeks is better than no functionality for several months. It would be far better to focus on smaller efforts which can be reviewed frequently through direct business feedback, and as such to deliver working software on a more regular basis.

As such, the risk of getting it wrong, the risk of delivering unwanted features and the risk of misinterpretation are significantly reduced. By demoing prototypes which are representative of the end solution, business users can immediately imagine what the functionality will look like in their standard BI tools. The preferred way to capture business requirements is by defining user stories, or so-called business questions.

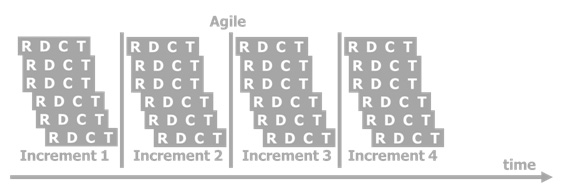

These user stories are gathered in a product backlog and are prioritized by the business users. The highest ranked user stories will be delivered within each new increment. In a certain way agile BI chops up the traditional waterfall software development cycle, but makes sure that each increment is delivered in full. This implies that the most important user story as a whole is analyzed, designed, built and tested first, and the other stories follow one after the other. At any given time business users could decide to stop the project when a satisfactory set of user stories has been delivered.
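As a minimal sketch of how the highest ranked user stories could be picked for one increment, consider the following. The `UserStory` fields, the effort estimates and the capacity figure are illustrative assumptions, not part of any particular agile framework.

```python
from dataclasses import dataclass


@dataclass
class UserStory:
    title: str
    priority: int      # 1 = most important, as ranked by the business users
    effort_days: int   # rough estimate; illustrative only


def plan_increment(backlog, capacity_days):
    """Pick stories in priority order, skipping any that no longer fit."""
    selected, remaining = [], capacity_days
    for story in sorted(backlog, key=lambda s: s.priority):
        if story.effort_days <= remaining:
            selected.append(story)
            remaining -= story.effort_days
    return selected


# Hypothetical backlog entries, invented for this example.
backlog = [
    UserStory("Revenue by region per month", priority=2, effort_days=8),
    UserStory("Top 10 customers by margin", priority=1, effort_days=5),
    UserStory("Stock level snapshot per warehouse", priority=3, effort_days=10),
]

increment = plan_increment(backlog, capacity_days=15)
print([story.title for story in increment])
```

The third story does not fit in the remaining capacity, so it simply stays on the backlog for a later increment, where the business users can re-rank it.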

The questions here are: How small is small enough?

Shouldn’t a difference be made between front-end and back-end activities? And what about the BI architecture?

Agile in practice

Ideally a first increment should emphasize the following:

- Assembly of an initial product backlog, prioritized by epic.

- A macro design based on the initial product backlog, such as the types of database stages required (staging area, enterprise data warehouse, data mart, operational data stores, etc.), the data mart types required (transactional, snapshot, etc.), the data acquisition and integration approaches needed (information matrix, change data capture, batch processes, etc.) and the information delivery necessary (reports, cubes, etc.).

- The infrastructure on which to build the BI solutions and the BI tools must be ready.

Certain elements are for obvious reasons also present in a waterfall methodology.

It’s pretty clear that a data warehouse (including ETL & dimensional models) still has its place in an agile BI architecture. In another insight on this website (‘Is there still a need for a data warehouse?’) you can read all about the various data warehouse drivers such as a single version of the truth, data quality, complexity of transformations, historical views on data, integrating data from multiple sources and so on.

Now, how should the BI solution be cut into pieces, which then could each be tackled in an agile way?

Basically there are three main components in a BI environment:

- the dimensional model also referred to as star schemas & OLAP cubes which we refer to as ‘store’,

- the ETL and all associated preparation layers (e.g. landing zones, staging areas, etc.) which we refer to as ‘acquire’ and

- finally the front-end part consisting of reports, dashboards etc. which we refer to as ‘deliver’.

Let’s begin with the middle and most essential part, the store.

This is the most difficult one to tackle in an agile manner. To start with, in order to have input for creating a target data model or a presentation area, a backlog should be made available with the most relevant user stories and their associated business reasoning (i.e. why do you want this question to be answered?). These user stories should then be grouped by topic; these topics will most likely correspond to certain information domains, or subject areas if you will.

Each topic should then be prioritized, and within each topic each user story should be prioritized accordingly. Within this process the information matrix could be used to capture the most relevant dimensions & measures in the context of certain user stories. It is important that the business users do the modeling together with the data architect – data modeling is way too important to leave it up to data modelers only :-) – so that the business users get a feeling early on for what the final target data model will be capable of.

The information matrix in turn is then worked out in more detail by means of a conceptual model, which is then, ideally automatically, translated into a physical data model.
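One way to picture such an information matrix is as a mapping from user stories to the dimensions & measures they need. The sketch below is only an illustration under that assumption; the story titles, dimension names and the `dimension_usage` helper are hypothetical.

```python
# Hypothetical information matrix: user story -> dimensions and measures it needs.
information_matrix = {
    "Top 10 customers by margin": {
        "dimensions": {"customer", "date"},
        "measures": {"margin"},
    },
    "Revenue by region per month": {
        "dimensions": {"region", "date"},
        "measures": {"revenue"},
    },
}


def dimension_usage(matrix):
    """Return, per dimension, the sorted list of user stories that need it."""
    usage = {}
    for story, needs in matrix.items():
        for dim in needs["dimensions"]:
            usage.setdefault(dim, []).append(story)
    return {dim: sorted(stories) for dim, stories in usage.items()}


usage = dimension_usage(information_matrix)

# Dimensions needed by more than one story are candidates to model first.
shared = sorted(dim for dim, stories in usage.items() if len(stories) > 1)
print(shared)
```

Reading the matrix by dimension rather than by story shows which dimensions serve several user stories at once, which can help in deciding what to model in an early increment.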

Kimball star schemas are not really optimized for handling changing or even plain new requirements. For example, changing a slowly changing dimension from type 1 to type 2 requires the dimension and all its associated facts to be populated again. On the other hand, the retention strategy for the sources in scope might mean that certain data will only be available for a relatively short period of time, or that certain changes or deletes are lost on the spot.
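To make the difference between the two dimension types concrete, here is a small sketch using plain Python lists instead of database tables. The column names (`surrogate_key`, `valid_from`, `valid_to`) follow a common convention but are assumptions for this example.

```python
from datetime import date


def apply_type1(dimension, customer_id, new_city):
    """Type 1: overwrite in place, so the previous value is lost."""
    for row in dimension:
        if row["customer_id"] == customer_id:
            row["city"] = new_city


def apply_type2(dimension, customer_id, new_city, change_date):
    """Type 2: close the current row and append a new version."""
    for row in dimension:
        if row["customer_id"] == customer_id and row["valid_to"] is None:
            row["valid_to"] = change_date
    next_key = max(row["surrogate_key"] for row in dimension) + 1
    dimension.append({
        "surrogate_key": next_key,
        "customer_id": customer_id,
        "city": new_city,
        "valid_from": change_date,
        "valid_to": None,
    })


dim_type1 = [{"surrogate_key": 1, "customer_id": "C1", "city": "Ghent",
              "valid_from": date(2020, 1, 1), "valid_to": None}]
dim_type2 = [dict(row) for row in dim_type1]  # independent copy of the same start state

apply_type1(dim_type1, "C1", "Antwerp")
apply_type2(dim_type2, "C1", "Antwerp", date(2023, 6, 1))

print(len(dim_type1), len(dim_type2))
```

With type 2, each version of the customer gets its own surrogate key, so facts have to point at the version that was valid at the time. That is why switching an existing dimension from type 1 to type 2 means reloading the facts as well, and why it only works if the source still holds the history.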

Obviously, before the data gets into the final presentation layer it is advisable to first store the data in a ‘ready-for-action’ stage, which could be an operational data store, an enterprise data warehouse, etc. Such a stage is able to cope far better with these kinds of challenges than a pure Kimball approach.

It is however vital that data and table/column structures can be added to this intermediate stage in an incremental way. From a purely architectural perspective, it seems to make sense to create the entire data model up front. However, while the data model is being created, certain business requirements might change, and the resulting data model will then not satisfy the new version of the business needs. That’s why we need to be able to analyze, design and build one subject at a time, one table at a time or one column at a time. By no means should this stage be a one-shot exercise.
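Adding one column at a time can be illustrated with Python's built-in `sqlite3` module and an in-memory database. The `customer` table and its columns are made up for this sketch; a real intermediate stage would of course live in the organization's own database platform.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Increment 1: only the columns the first user story needs.
conn.execute("CREATE TABLE customer (customer_id TEXT PRIMARY KEY, name TEXT)")
conn.execute("INSERT INTO customer VALUES ('C1', 'Acme')")

# Increment 2: a new user story needs the segment, so add just that column.
conn.execute("ALTER TABLE customer ADD COLUMN segment TEXT")
conn.execute("UPDATE customer SET segment = 'Retail' WHERE customer_id = 'C1'")

# PRAGMA table_info returns one row per column; index 1 holds the column name.
columns = [row[1] for row in conn.execute("PRAGMA table_info(customer)")]
print(columns)
```

The data loaded in the first increment stays in place; the new column is simply empty for existing rows until the second increment fills it.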

Once a dimension, a fact or a major part thereof is finished from a data modeling design perspective in the context of one or more user stories, the ETL activities can also be tackled in an agile way. By dividing the increment into smaller bits and pieces, a nimble way of working can be reached. Remember that each single increment should create business value. If a complex customer dimension delivers that business value, then by all means the content of that increment could be just that, even without a related fact.

It should be possible to add by columns, by sources, by rows and by tables. For example in a 1st increment the data for a certain dimension is acquired from source A, whereas in the 2nd increment the data for the same dimension is sourced from source B.

For another dimension, in a 1st increment a certain subset of the data (e.g. a certain geographic region or a certain customer subtype …) is sourced from source A and in a 2nd increment the remaining subset is assembled further.

And finally in a 1st increment the data is made available in a non-historical manner and in a 2nd increment the data is made available in a correct historical fashion.

That leaves us with the delivery part. Ideally, all base & derived fields required for a certain report are identified together, in a previous increment or in the current one. Each report can then be created in an agile fashion, report by report, without any detailed functional specification up front, since all fields are by now already present in the underlying data model. Simple SQL browser tools could be used here to create report prototypes in order to speed things up, but as mentioned earlier there is no substitute for the final BI tool. Reports & dashboards which require information from multiple star schemas, however, can only be tackled when all preconditions have been met, and should be moved to a later increment.

All three major components (store, acquire & deliver) could be mutually grouped by star schema instead of subject area or group of subject areas. But then again, it could be that business value even exists in delivering only a part of a star schema.

Within any component it is crucial that representative data is provided to support better business feedback. For that reason, even the smallest component should be accompanied by its fair share of data profiling in order to make things more tangible.

Obviously, also in an agile mode, it is important to use well-defined templates, common standards and a minimal, yet essential, documentation set.
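As an indication of what a basic share of data profiling could look like, the sketch below profiles one column of a small in-memory sample. The `profile_column` helper and the sample rows are hypothetical; dedicated profiling tools offer far more than this.

```python
def profile_column(rows, column):
    """Basic profile of one column: row count, nulls, distinct values, min and max."""
    values = [row.get(column) for row in rows]
    present = [v for v in values if v is not None]
    return {
        "rows": len(values),
        "nulls": len(values) - len(present),
        "distinct": len(set(present)),
        "min": min(present) if present else None,
        "max": max(present) if present else None,
    }


# Invented sample data standing in for a source extract.
sample = [
    {"customer_id": "C1", "region": "North"},
    {"customer_id": "C2", "region": None},
    {"customer_id": "C3", "region": "North"},
    {"customer_id": "C4", "region": "South"},
]

region_profile = profile_column(sample, "region")
print(region_profile)
```

Even a simple profile like this gives business users something tangible to react to, for example a missing region that nobody expected.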

Regardless of the delivery method used, whether it is waterfall or agile, documentation must be produced: user guides, source-2-target mappings, data models and testing documents, to name but a few. Each of these documents is written down as a user story in its own right, so that time can be set aside for these tasks. No time is wasted on documents that deliver no value to the project.

Compare this approach to a classic waterfall methodology and you will find your BI team spending weeks writing out large requirements documents with lots of detail. While these documents may look impressive, the time to create them is not well spent, since the requirements might have changed before the documents can be read by the business users.

Automation

To be flexible and to respond fast to new & changing BI requirements, it is of great importance to seek automation for those processes which are performed more than once.

A fully automated BI solution, from sources up to reports, including all related activities, is not yet here. However, certain parts can already be automated by using data warehouse automation tools. Most of the tools in this space use metadata in an intelligent way to automatically generate a variety of data warehousing structures. The idea is that once these tools have been configured, a button is pushed and the tool automatically generates key elements within a BI environment, such as reports, semantic layers, star schemas, ETL transformations, and so on.
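The principle of generating structures from metadata can be shown with a very small sketch. The metadata format and the `generate_ddl` function below are invented for illustration and do not reflect how any specific data warehouse automation tool works.

```python
# Hypothetical metadata describing one star schema; not the format of any real tool.
metadata = {
    "dim_customer": {"customer_key": "INTEGER", "name": "TEXT", "segment": "TEXT"},
    "fact_sales": {"customer_key": "INTEGER", "date_key": "INTEGER", "revenue": "REAL"},
}


def generate_ddl(tables):
    """Generate one CREATE TABLE statement per table described in the metadata."""
    statements = []
    for table, columns in tables.items():
        column_list = ", ".join(f"{name} {sql_type}" for name, sql_type in columns.items())
        statements.append(f"CREATE TABLE {table} ({column_list});")
    return statements


ddl = generate_ddl(metadata)
for statement in ddl:
    print(statement)
```

When a requirement changes, only the metadata is edited and the structures are regenerated, which is what makes this approach attractive for rapid prototyping.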

Because of the nature of these tools, they can be used to rapidly prototype BI solutions and get user feedback quickly. This functionality facilitates faster development, improves business user satisfaction and finally buy-in to the final solution.

Be aware that automation might also include support for prototyping, test-data generation, automated testing & documentation, data lineage & impact analysis. It should also be taken into account that the 80-20 rule applies here as well. For example, 80% of all ETL work will relate to low-complexity jobs, while 20% will relate to medium- to high-complexity jobs. How much of the 20% can be handled in an automated way in the first place? How much of the 20% can be handled by a data warehouse automation tool?

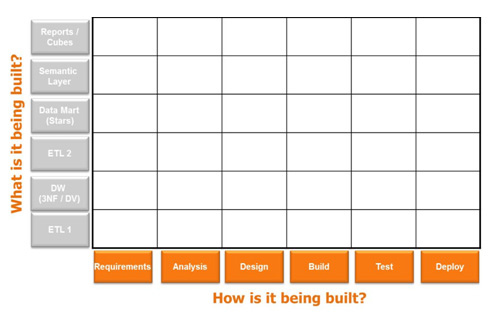

Various new BI vendors are popping up in this data warehouse automation space. To name but a few: BIReady, TimeXtender & WhereScape. It is however crucial to understand exactly what they can and cannot automate. In order to weigh these functionalities correctly, the following matrix could be used:

This matrix will help in evaluating potential data warehouse automation tools.

The vertical axis refers to the data warehouse layers which could be generated. From bottom to top, this includes ETL activities between sources & the optional enterprise data warehouse, the optional enterprise data warehouse layer itself, the ETL activities between the optional enterprise data warehouse and the star schemas, the semantic layer within a BI tool (e.g. IBM Cognos Framework or SAP BusinessObjects Universe) and finally the reports, dashboards and cubes.

The horizontal axis refers to the process side. Can a tool which is able to generate star schemas also offer extensive support in capturing requirements, in analyzing & profiling source data, and in designing, building & testing the BI solution?
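Such a matrix could be kept as a simple set of (layer, phase) cells per tool. The sketch below assumes five layers and five process phases derived from the two axes described above; the phase labels, the `coverage` function and the example tool are all hypothetical and say nothing about a real product.

```python
# Vertical axis, from bottom to top.
LAYERS = [
    "ETL: sources to EDW",
    "Enterprise data warehouse",
    "ETL: EDW to star schemas",
    "Semantic layer",
    "Reports, dashboards and cubes",
]
# Horizontal axis: the process side.
PHASES = ["requirements", "profiling", "design", "build", "test"]


def coverage(tool_support):
    """Share of (layer, phase) cells a tool claims to support, between 0 and 1."""
    cells = [(layer, phase) for layer in LAYERS for phase in PHASES]
    supported = sum(1 for cell in cells if cell in tool_support)
    return supported / len(cells)


# Hypothetical tool: the cells below are invented, not an assessment of a real product.
tool_x = {
    ("ETL: sources to EDW", "design"),
    ("ETL: sources to EDW", "build"),
    ("Enterprise data warehouse", "design"),
    ("Enterprise data warehouse", "build"),
    ("ETL: EDW to star schemas", "build"),
}

print(coverage(tool_x))
```

A single coverage figure hides a lot, so in practice the filled-in cells themselves are more informative than the ratio: they show at a glance which layers and phases a tool leaves untouched.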

Agile risks

As with any methodology, there are certain risks associated with agile BI:

- Is agile BI suited to any organization?

- Is the organization ready – does it have an agile mindset?

- Has a BI program been defined or does it only concern a one-off initiative?

- What’s the level of BI maturity within the organization?

- What’s the level of BI experience of the business users?

- What’s the level of experience with BI of the BI technical staff?

- Is agile BI suited to any given BI team?

- Is agile BI suited for very small teams?

- Is a successful BI project methodology already in place?

- Is the data warehouse solution built from scratch or is it about maintenance and change on an existing solution only?

- Does the business have the time and the willingness to spend it with the BI staff?

- Early decisions may prove to be wrong when new information is captured (i.e. “if only we had known earlier”).

- Requirements which are never final offer a business opportunity, but at the same time they pose a potential threat to the stability of a BI solution.

- Project scope creep from a time perspective – how many increments does it take?

- Agile does not equal no documentation, and agile does not equal no design – do not expect any miracles in that respect from any data warehouse automation tool.

- Agile is not about putting patches on patches. E.g. be extremely careful in terms of semantic layers.

- How do we fit data quality problems, which are most often unforeseen and far bigger than initially expected, within an agile framework?

- ETL complexity – how difficult will the integration of all required data be and how difficult are the associated transformations? After all, BI applications could be seen as separate and independent pieces of software, but collectively architected ETL processes cannot, which makes the ETL architecture potentially extremely complex.

- How much change is expected in requirements? 5%? 50%?

- Are our current BI tools up to the agile challenges?

- Agile BI has its value within the initial creation, but also in changing the BI solution at a later stage. However changing a BI solution at a later stage means that the solution is already in use and that it is populated with real data, which potentially needs to be historically correct. Are data warehouse automation tools up to this challenge?

- Regression testing needs to happen over & over, even though early iterations only have limited value.

Conclusion

In 1966 a clear distinction existed between the good and the bad in the epic western movie of the same name. In terms of agile BI & BI using a waterfall approach things are equally distinctive. When we think about a business intelligence project, it is a business problem that requires both a business and a technology solution. The business processes, data & requirements still need to be studied, which takes the same amount of time, unless the requirements change ...

Agile doesn’t only mean “faster & faster”. It is also about doing things differently.

Not just building things quickly, but building the right things quickly.