What is a Data Lake?

Data Lake is a file-based system that allows to store both structured and unstructured data. All the raw data coming from different sources can be stored in a data lake without pre-defining a schema for it. Unlike a data warehouse where the data is first processed and structured based on some business needs before entering the data warehouse, the data lake can contain all types of data from many different sources without processing it first or worrying about the later use.

Data Lakes are very agile storage systems where users have all the flexibility of how they want to store the data. Moreover, the data stored in a data lake is very easy to process because it can be accessed from many different processing engines and at the same time having the possibility to leverage the parallel computing for it.

We need data lakes in order to store all types of data coming from different sources in a cheap, scalable and easy-to-process way.

To avoid creating a storage where all the data is just dumped into it and then having the difficulty of accessing it or even finding it later it is important that the data is well organized across the data lake.

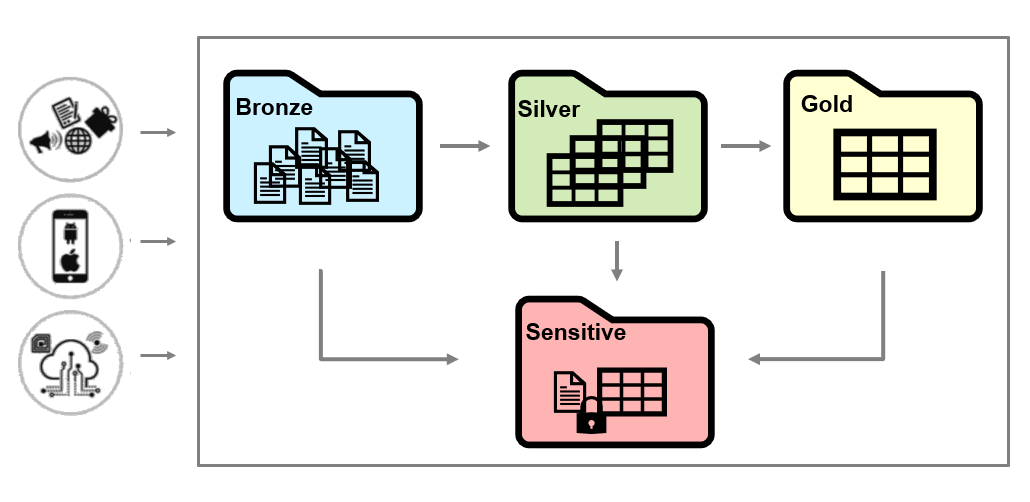

It is a best practice to organize your data lakes in different zones:

- Bronze zone - keeps the raw data coming directly from the ingesting sources

- Silver zone – keeps clean, filtered and augmented data

- Gold zone - keeps the business value data

- Sensitive zone – keeps sensitive data and users have restricted access to this data

What is Delta Lake?

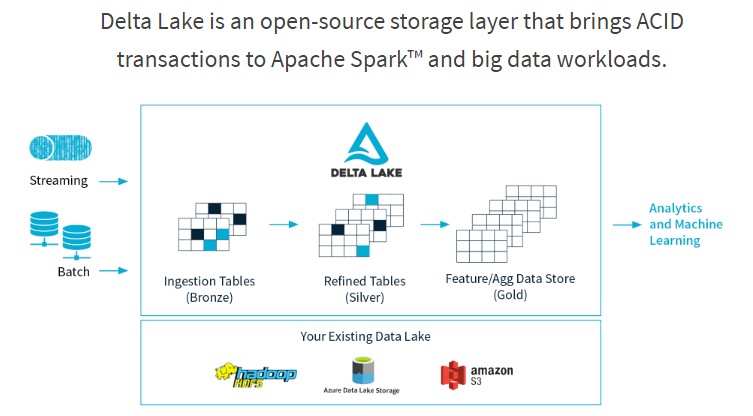

Data lakes typically have multiple data pipelines reading and writing data concurrently. It's hard to keep data integrity due to lack of how Spark and generally big data works (distributed writes, jobs taking a long time). Delta lake is a new Spark functionality released to solve this.

Delta lake is an open-source storage layer from Spark which runs on top of an existing data lake (Azure Data Lake Store, Amazon S3 etc.). Its core functionalities bring reliability to the big data lakes by ensuring data integrity with ACID transactions while at the same time, allowing reading and writing from/to same directory/table. ACID stands for Atomicity, Consistency, Isolation and Durability.

- Atomicity: Delta Lake can guarantee atomicity by providing a transaction log where every fully completed operation is recorded, and if the operation was not successful it would not be recorded. This property can ensure that no data is partially written which can then result in inconsistent or corrupted data.

- Consistency: With a serializable isolation of write, data is available for read and the user can see consistent data.

- Isolation: Delta Lake allows for concurrent writes to table resulting in a delta table same as if all the write operations were done one after another (isolated).

- Durability: Writing the data directly to a disk makes the data available even in case of a failure. With this Delta Lake also satisfies the durability property.

Azure Databricks has integrated the open-source Delta Lake into their managed Databricks service making it directly available to its users.

Why do we need Delta?

With the rise of big data, data lakes became a popular choice for storing the data for a large number of organizations. Despite the pros of data lakes, a variety of challenges arises with the increased amount of data stored in one data lake.

| Data Lake Challenges | Solution with Delta Lake |

|---|---|

|

Writing Unsafe Data If a big ETL job fails while writing to a data lake it causes the data to be partially written or corrupted which highly affects the data quality |

ACID transactions With Delta, we can guarantee that a write operation either finishes completely or not at all which avoids corrupted data to be written |

|

No consistency in data when mixing batch and streaming Developers need to write business logic separately into a streaming and batch pipeline using different technologies Additionally, there is no possibility to have concurrent jobs reading and writing from/to the same data |

Unified batch and stream sources and sinks With Delta, the same functions can be applied to both batch and streaming data and with any change in the business logic we can guarantee that the data is consistent in both sinks. Delta also allows to read consistent data while at the same time new data is being ingested |

|

Schema mismatch We all know incoming data can change over time. In a classical Data Lake, it will likely result in data type compatibility issues, corrupted data entering your data lake etc. |

Schema enforcement With Delta, a different schema in incoming data can be prevented from entering the table to avoid corrupting the data Schema evolution If enforcement isn’t needed, users can easily change the schema of the data to intentionally adapt to the data changing over time |

|

No data versioning In a Data lake, data is constantly modified so if a data scientist wants to reproduce an experiment with the same parameters from a week ago it would not be possible unless data is copied multiple times |

Time travel With Delta, users can go back to an older version of data for experiment reproduction, fixing wrong updates/deletes or other transformations that resulted in bad data, audit data etc. |

|

Metadata management With all the different types of data stored in data lake and the transformations applied on the data it’s hard to keep track of what is stored in the data lake or how this data is transformed |

Metadata handling With Delta, we can use the transaction log users to see metadata about all the changes that were applied on the data |

Our recommendation

element61 believes Delta features create a big opportunity for anyone starting with a Data Lake or already having a Data Lake. Delta is an easy-to-plug layer which we can plug on top a Azure Data Lake which allow us to offer you true streaming analytics and big data handling while having all benefits such as time travel, metadata handling, and ACID transaction.

Continue reading...

Discover more on Azure Databricks and Delta through below reads:

Contact us if you have more questions on Delta and how to get started with your Data Lake.