It is nothing new that the world of data science and machine learning changes every day. We, as data scientists, need to keep pace with these emerging trends. The increasing demand for data science projects results in an expanding supply of data science tools. They all try to combine the best performance with enough ease of use that even a beginning data scientist can get started. However, are these tools ready to be used and, even more importantly, can they deliver value to you and your company?

It is often said that 80% of the time in a data science project is spent on data preprocessing. However, every data scientist knows that optimizing a model, although often not that code-intensive, is also very time-consuming. After some changes to the selected features, some cross-validation and some experiments with new algorithms, you suddenly find yourself at iteration 37 and counting. Then the next data science project comes along and, although your knowledge of modeling and cross-validation has grown since the previous project, it takes a similar number of iterations or even more. That is quite surprising, since the modeling phase is often not that project-specific. So why not automate this part of the data science project?

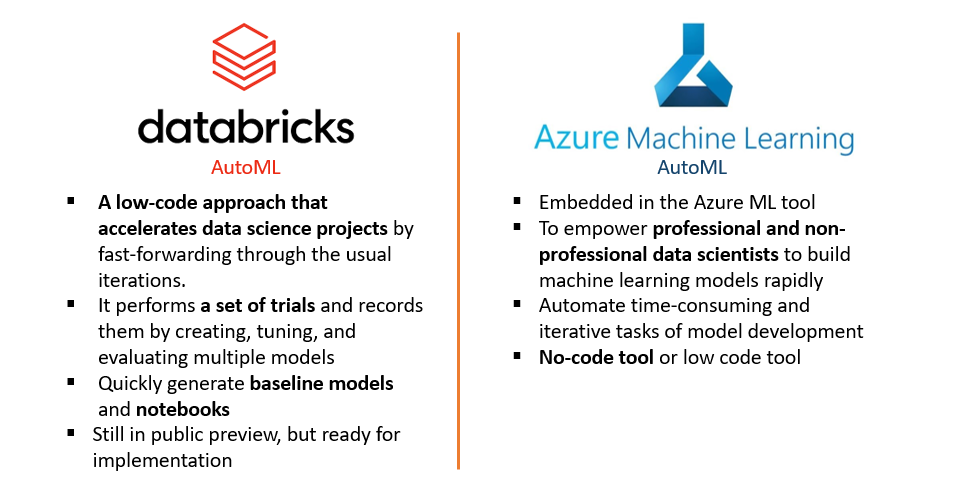

In this article, I will discuss the advantages and downsides of some automated machine learning tools and the cases in which you can employ them. Since we most often work with Databricks and Azure, two tools are within our reach: Azure Automated ML and Databricks AutoML. Make no mistake, many other tools exist. However, an important consideration when choosing a data science tool is how well it fits with the other data tools and systems used for the project.

What is automated ML and what are automated ML tools?

Automated machine learning, also referred to as automated ML or AutoML, is the process of automating the time-consuming, iterative tasks of machine learning model development. It allows data scientists, analysts, and developers to build ML models with high scale, efficiency, and productivity all while sustaining model quality.
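To make the idea concrete, the loop that such tools automate can be sketched in a few lines of plain Python. This is only a minimal illustration under stated assumptions, not how Azure Automated ML or Databricks AutoML work internally: the toy dataset, the candidate models (a majority-class baseline and k-nearest-neighbours with arbitrarily chosen values of k) and all helper names are hypothetical and chosen for the example.

```python
from collections import Counter

# Hypothetical toy dataset: two numeric features per row, binary label.
X = [(1.0, 1.2), (0.9, 1.0), (1.1, 0.8), (1.3, 1.1), (0.8, 0.9), (1.2, 1.3),
     (3.0, 3.2), (2.9, 3.1), (3.1, 2.8), (3.3, 3.0), (2.8, 2.9), (3.2, 3.3)]
y = [0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1]


def majority_model(train_X, train_y):
    """Baseline: always predict the most frequent training label."""
    label = Counter(train_y).most_common(1)[0][0]
    return lambda row: label


def knn_model(k):
    """Return a training function for a k-nearest-neighbours classifier."""
    def train(train_X, train_y):
        def predict(row):
            # Keep the k training points closest to `row` (squared Euclidean distance).
            nearest = sorted(
                zip(train_X, train_y),
                key=lambda pair: sum((a - b) ** 2 for a, b in zip(pair[0], row)),
            )[:k]
            return Counter(label for _, label in nearest).most_common(1)[0][0]
        return predict
    return train


def cross_val_accuracy(train, X, y, n_folds=3):
    """Mean accuracy over n_folds interleaved folds."""
    scores = []
    for fold in range(n_folds):
        test_idx = set(range(fold, len(X), n_folds))
        train_X = [x for i, x in enumerate(X) if i not in test_idx]
        train_y = [t for i, t in enumerate(y) if i not in test_idx]
        predict = train(train_X, train_y)
        hits = sum(predict(X[i]) == y[i] for i in test_idx)
        scores.append(hits / len(test_idx))
    return sum(scores) / n_folds


# The "automated" part: score every candidate the same way and keep the best.
candidates = {
    "majority": majority_model,
    "knn_1": knn_model(1),
    "knn_3": knn_model(3),
}
results = {name: cross_val_accuracy(train, X, y) for name, train in candidates.items()}
best_name = max(results, key=results.get)
print(results, "->", best_name)
```

Real AutoML tools search far larger spaces (algorithms, hyperparameters, preprocessing steps), but the principle of evaluating every candidate with the same validation scheme and ranking the results is the same.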

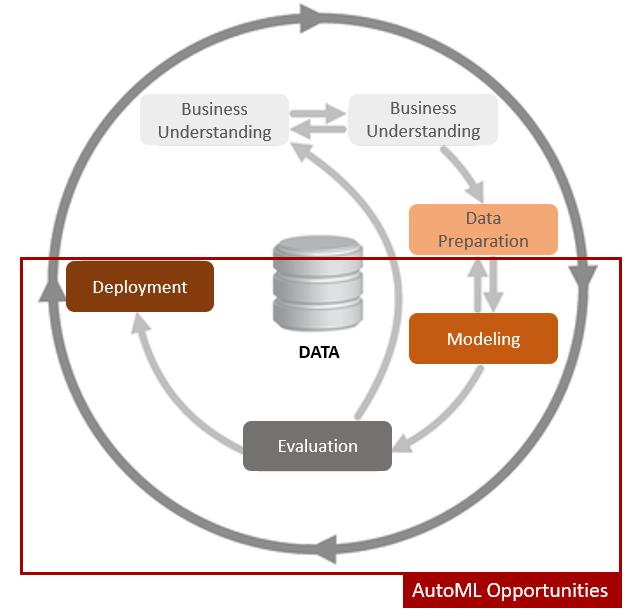

Automated ML in the CRISP-DM model

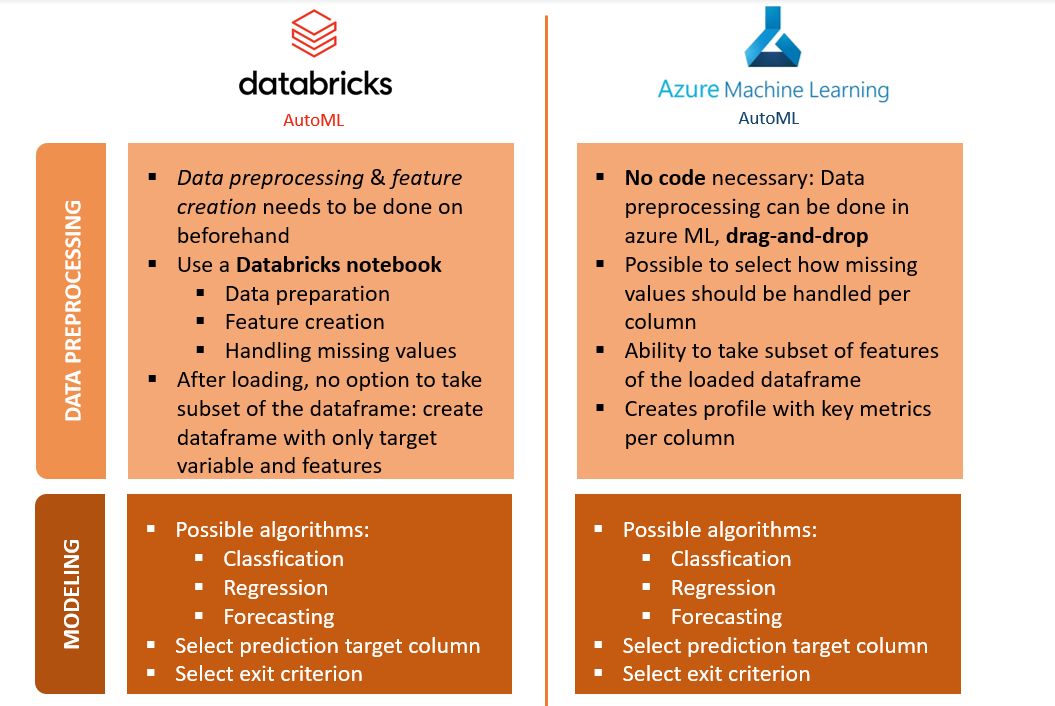

Let us walk through the different steps of a data science project and look at how these two automated machine learning tools could reduce the time we spend on the project and, even more importantly, how they might improve our models. We will discuss the project steps by means of the CRISP-DM model. The first step is business and data understanding. Since automated ML does not change this step, we will start directly at the data preprocessing step.

Conclusion

The advantages of automated ML have proven to be numerous, and it will most certainly become a subprocess of data science projects. Still, we should stay cautious when implementing these tools. Since data quality is, in reality, often worse than expected, it is necessary to understand which data cleaning and preprocessing steps are executed and, more importantly, the motivation for these transformations. Data quality and model interpretability therefore still form barriers that, to this day, make it impossible to automate a data science project in its entirety. This, however, should not be a reason to put these tools aside, but an incentive to integrate them into your way of working where they can create value for you and your company, for example to verify the predictive power of a dataset or to get a baseline model that guides the direction of the project. We should acknowledge, though, that AutoML tools can only create the best possible model given the features. In other words, when your features are wrongly created or contain too little predictive value, your model’s performance will suffer.
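The "predictive power" check mentioned above can be sketched as a comparison against a trivial baseline. The snippet below is a simplified illustration, not what either tool does: the data, the feature names `feature_a` and `feature_b`, and the choice of a leave-one-out 1-nearest-neighbour model are all assumptions made for the example.

```python
from collections import Counter

# Hypothetical toy data: (feature_a, feature_b, label).
rows = [
    (1.0, 1.0, 0), (1.1, 6.5, 0), (1.2, 3.0, 0), (1.3, 9.0, 0), (1.4, 1.5, 0),
    (1.5, 7.0, 0), (3.0, 5.0, 1), (3.1, 2.5, 1), (3.2, 8.5, 1), (3.3, 5.4, 1),
]
labels = [r[2] for r in rows]


def baseline_accuracy(labels):
    """Accuracy of always predicting the most frequent label."""
    return Counter(labels).most_common(1)[0][1] / len(labels)


def loo_1nn_accuracy(values, labels):
    """Leave-one-out accuracy of a 1-nearest-neighbour model on a single feature."""
    hits = 0
    for i, v in enumerate(values):
        # Find the closest *other* point on this feature and borrow its label.
        j = min(
            (k for k in range(len(values)) if k != i),
            key=lambda k: abs(values[k] - v),
        )
        hits += labels[j] == labels[i]
    return hits / len(values)


baseline = baseline_accuracy(labels)
scores = {}
for name, col in (("feature_a", 0), ("feature_b", 1)):
    scores[name] = loo_1nn_accuracy([r[col] for r in rows], labels)
    verdict = "adds signal" if scores[name] > baseline else "no better than baseline"
    print(f"{name}: accuracy={scores[name]:.2f} baseline={baseline:.2f} -> {verdict}")
```

A feature set that cannot beat the baseline is a hint to revisit feature engineering before spending more time on model tuning, automated or not.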

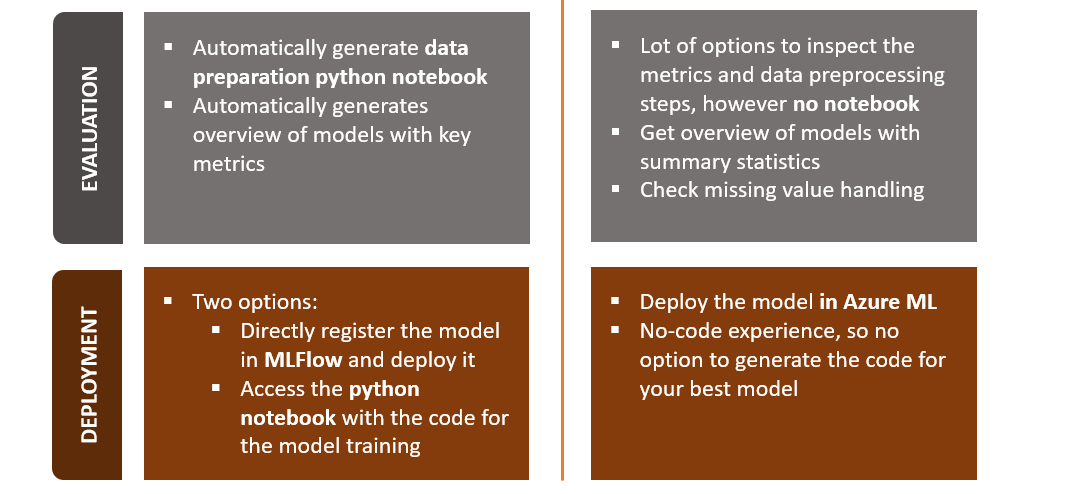

Whether to use Azure Automated ML or Databricks AutoML mainly depends on how good your coding skills are, since the modeling phase is quite similar in both. My preferred tool is Databricks AutoML, since it is less of a black box: I can do the data preprocessing myself. Moreover, the integration of MLflow is an indisputable advantage. Furthermore, the generated notebook gives more options for making small changes to the model depending on the business input.
