On 13 May 2020, the NYC Apache Airflow Meetup hosted a virtual event entitled “What’s coming in Airflow 2.0”. Being big fans of Airflow at element61, we were curious to find out what changes are to be expected in this long-awaited Airflow version.

In this article, we provide a high-level summary of the changes that were discussed, focussing on those most relevant to people who build, maintain and follow up on DAG runs, and less on the setup of Airflow itself.

Useful links:

- If you're new to Airflow or looking for more information, the Airflow website was recently restyled and should be a great starting point.

- In a previous post, we compared Airflow and Azure Data Factory.

DAG Serialization

Previously, both the Airflow webserver and scheduler needed to have access to the DAG files. Both of these Airflow components would then actively read and parse the DAG files.

DAG serialization refers to storing a serialized (i.e. JSON) representation of the DAGs in the database. When enabled, the scheduler takes care of parsing the DAG files and stores a representation in the database. The webserver can then fetch this representation to populate the user interface.

The advantages are twofold:

- The webserver doesn’t need to be able to access the DAG files. This simplifies the Airflow setup and the deployment of DAGs.

- The load on the webserver is reduced as the serialized DAGs are retrieved from the database instead of being parsed from the DAG files.

Note: DAG serialization is already available in the latest version of Airflow, where the scheduler takes up this task. In Airflow 2.0, DAG parsing & serialization will most likely be done by a separate component: ‘serializer’ or ‘DAG parser’ were two of the suggestions, although the exact name is still to be confirmed.
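To make the idea concrete, here is a minimal, Airflow-free sketch of the flow described above: one process parses a DAG and writes a JSON representation to a database, and another reads it back without ever touching the DAG file. The table name, the dictionary layout and the function names are our own assumptions for illustration; they are not Airflow's actual schema or serialization format.

```python
import json
import sqlite3

# In-memory SQLite database standing in for the Airflow metadata database.
conn = sqlite3.connect(":memory:")
# Hypothetical table layout, chosen for this sketch only.
conn.execute(
    "CREATE TABLE serialized_dag (dag_id TEXT PRIMARY KEY, data TEXT NOT NULL)"
)


def store_serialized_dag(connection, dag):
    """Scheduler side: persist a JSON representation of an already parsed DAG."""
    connection.execute(
        "INSERT OR REPLACE INTO serialized_dag (dag_id, data) VALUES (?, ?)",
        (dag["dag_id"], json.dumps(dag)),
    )
    connection.commit()


def load_serialized_dag(connection, dag_id):
    """Webserver side: rebuild the DAG description from the database only."""
    row = connection.execute(
        "SELECT data FROM serialized_dag WHERE dag_id = ?", (dag_id,)
    ).fetchone()
    return json.loads(row[0]) if row else None


# Hypothetical, simplified result of parsing a DAG file.
parsed_dag = {
    "dag_id": "example_etl",
    "schedule_interval": "@daily",
    "tasks": [
        {"task_id": "extract", "downstream": ["transform"]},
        {"task_id": "transform", "downstream": ["load"]},
        {"task_id": "load", "downstream": []},
    ],
}

store_serialized_dag(conn, parsed_dag)
ui_dag = load_serialized_dag(conn, "example_etl")
print([t["task_id"] for t in ui_dag["tasks"]])
```

The reading side only needs database access, which is what allows the webserver to run without the DAG files.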

DAG versioning

Currently, adding tasks to an existing DAG has the side effect of introducing “no-status” tasks in the historic overview. DAG versioning will be introduced to overcome this inconsistency. From the presentation, it was not exactly clear what this would look like, but we are definitely looking forward to this feature.

Versioning is already well established in the data science world for code (e.g. git) and is making rapid progress for data (e.g. delta). Having Airflow DAGs versioned seems like a great addition to that and another great step towards consistent and clear flows of data.

Scheduler improvements

The Airflow scheduler, responsible for picking up and distributing tasks over the various workers, is one of the core components in Airflow. With the current implementation of the scheduler, users can experience a slight delay in tasks being picked up due to the overhead of switching between tasks. This is especially bothersome when Airflow is processing a lot of small tasks and has been a thorn in the side of many Airflow users & contributors.

The changes in Airflow 2.0 are not only aiming to reduce these delays, but also to make it possible to run multiple schedulers at once. Horizontal scaling of the scheduler – i.e. running multiple schedulers at once – will allow users to obtain a highly available Airflow setup and reduce the risk of tasks being missed.

REST API

Airflow 2.0 will feature a complete REST API that follows the OpenAPI 3.0 specification.

This major feature will not only allow Airflow to integrate with external components; we are also specifically looking forward to using it to administer users & connections.
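As a rough sketch of what administering connections over HTTP could look like, the snippet below builds (but does not send) an authenticated GET request. The `/api/v1` prefix and the `connections` resource follow the stable REST API as it was eventually released with Airflow 2.0, not anything shown in the talk; the host, the credentials, the helper name and the use of basic authentication are assumptions for illustration.

```python
import base64
import urllib.request


def build_airflow_request(base_url, resource, username, password):
    """Build a GET request for an Airflow REST API resource without sending it."""
    # Assumed URL layout: <base_url>/api/v1/<resource>
    url = f"{base_url.rstrip('/')}/api/v1/{resource.lstrip('/')}"
    # Assumes the Airflow instance is configured to accept HTTP basic auth.
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    request = urllib.request.Request(url, method="GET")
    request.add_header("Authorization", f"Basic {token}")
    request.add_header("Accept", "application/json")
    return request


# Hypothetical local instance and credentials.
request = build_airflow_request("http://localhost:8080", "connections", "admin", "admin")
print(request.get_method(), request.full_url)
```

Passing the request to `urllib.request.urlopen` would perform the call against a running instance; the JSON response could then be decoded with the `json` module.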

Functional definition of DAGs

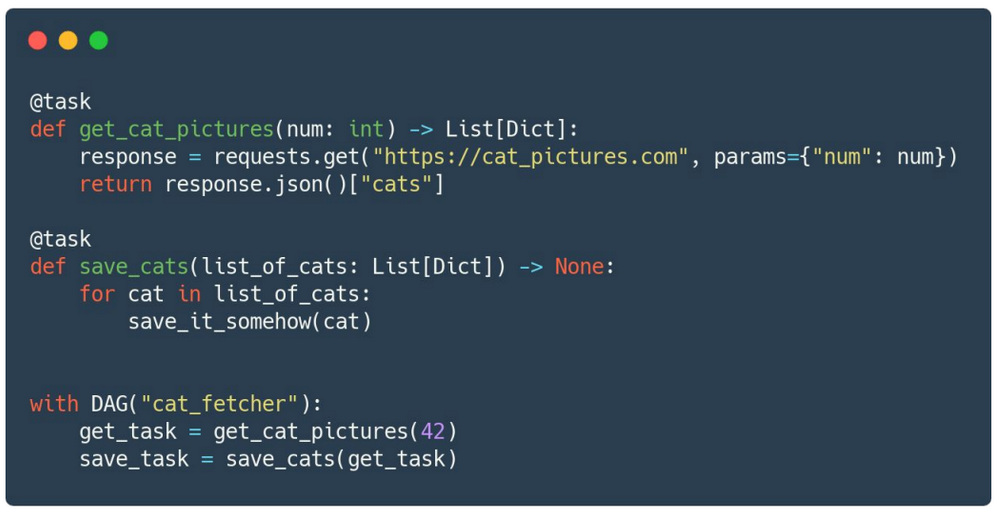

The functional DAG definition, described in AIP-31, will reduce the need for boilerplate code when working with the PythonOperator. Instead of wrapping the PythonOperator around a function, users will be able to use a decorator (the “@task” in the accompanying code snippet) to define Python tasks.

This modification allows for a more concise DAG definition, and also makes it possible to pass the output of one Airflow task as an argument to the next, leading to a functional definition of a DAG. The accompanying snippet illustrates this well.
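To show why passing one task to the next is enough to define a DAG, here is a toy, Airflow-free sketch of the mechanism. The `task` decorator and the `TaskCall` class below are our own stand-ins and not Airflow's implementation: calling a decorated function does not run it, but records a node together with the upstream nodes it received as arguments.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, List


@dataclass
class TaskCall:
    """A deferred task invocation: what to run and which tasks it depends on."""
    name: str
    func: Callable[..., Any]
    args: tuple
    upstream: List["TaskCall"] = field(default_factory=list)

    def run(self) -> Any:
        # Run upstream tasks first and feed their results into this task.
        resolved = [a.run() if isinstance(a, TaskCall) else a for a in self.args]
        return self.func(*resolved)


def task(func):
    """Toy decorator: calling the function builds a graph node instead of running it."""
    def wrapper(*args):
        upstream = [a for a in args if isinstance(a, TaskCall)]
        return TaskCall(func.__name__, func, args, upstream)
    return wrapper


@task
def extract():
    return [1, 2, 3]


@task
def transform(values):
    return [v * 10 for v in values]


@task
def load(values):
    return sum(values)


# The nesting of the calls is the dependency definition.
pipeline = load(transform(extract()))

# Walk the graph from the last task back to the first.
dependency_chain = []
node = pipeline
while node is not None:
    dependency_chain.append(node.name)
    node = node.upstream[0] if node.upstream else None

print(dependency_chain)  # ['load', 'transform', 'extract']
print(pipeline.run())    # 60
```

In the released Airflow 2.0 the decorator is importable from `airflow.decorators`, and the data passed between tasks travels through XCom rather than through in-process function calls as in this sketch.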

Others

Discussing all the changes that were announced would lead us too far. Nevertheless, there are some additional improvements we would like to mention:

- The RBAC UI will become the default and only available UI.

- Using Airflow with KEDA (Kubernetes Event-driven Autoscaling) allows for a more scalable setup when running Airflow on Kubernetes.

- Airflow 2.0 is expected to come with an official Helm chart.

When

To cite one of the presenters: “When it is ready”. Q3 2020 was mentioned as the target timeline, but we’ll have to see how development progresses over the coming weeks and months.

Talking about the coming months, the first Airflow Summit was announced to take place in July 2020. The global pandemic has led the organizers to make it a fully virtual & free event. We are very much looking forward to following the sessions and keeping you posted on the most interesting talks.

Thanks to the community

We would like to take this opportunity to thank all Airflow contributors for their work on this great project.

element61 is looking forward to extending its usage and knowledge of Airflow and to one day taking the leap of contributing to the project ourselves.

More specific questions on Airflow and how it can help you organize your data processing? Get in touch!

Continue Reading on Airflow

- Read our insight on Azure Data Factory & Airflow: mutually exclusive or complementary?