Warning: Do not try this at the office!

A complaint often heard about SAP BW systems is that they are too fast :-). Loading data as well as running reports happens in a blink of an eye leaving SAP BW administrators and report users with almost no time to get a cup of coffee or have a chat with their favorite colleague.

In this insight, we list some important "Tips & Tricks" if you want to slow down performance, or alternatively, things to watch out for if you want to tackle slow performance ...

Exploding dimensions

In SAP BW, InfoCubes are the main objects on which reports are run. These InfoCubes consist of a central fact table surrounded by several dimension tables. This layout is called the SAP BW star schema and is derived from the classic star schema, also known as the ‘snowflake’ schema. In the SAP BW star schema, the facts in the fact table refer the key figures and the dimension attributes to characteristics.

Image 1: The SAP BW star schema

When end user reports simply deliver the data too fast, it might be a good idea to make sure that the relative size of the dimension tables compared to the size of the fact table exceeds 10%. In other words, you want your dimensions to explode! The bigger they are, the more time will be required to join them with the fact table and produce the results in your report. So, for instant performance deterioration, start shuffling around the InfoObjects in such a way that the dimension tables can grow in size far beyond the 10% of the size of the fact table. To help you with this task, we refer to the following paper on the element61 website: ‘Multi-dimensional modeling in SAP BW: art or science?’.

Image 2: Line item dimension

Sometimes, dimensions can be extremely large by nature. For instance, any dimension holding document numbers, has the potential to become huge. SAP BW provides the possibility to identify such a dimension as a ‘line item dimension’. This means that the dimension only contains one InfoObject (for example: Document number) and that no physical dimension table is generated, instead, the fact table is directly linked to the SID table of this InfoObject. This way, no dimension IDs need to be generated during data loading and one table join can be avoided when this ‘line item characteristic’ is dragged into a report leading to increased performance in both backend and frontend. Therefore, uncheck this option and slow BW down!

Favor formulas

Transformations between SAP objects are a powerful tool to slow down data loads. When mapping one or more source fields onto a target field, SAP BW lets you choose from a list of rule types in order to determine how the target field will be calculated. One of these rule types is an absolute performance killer: the Formula.

Image 3: Transformation rule type

The reason why formulas contribute to performance deterioration can be found in the overhead that is needed to be able to define formulas in a readable and user friendly manner. When loading data through the transformation, this formula needs to be translated to ABAP. Since formulas are mainly used in field transformations, this translation to ABAP needs to be carried out over and over again (once for each record). Therefore, you should use formulas whenever you can. You should certainly not use routines to get the same result and you could even avoid ‘Direct Assignments’ by replacing them with formulas.

Image 4: Formula vs routine

Since quite often target fields are to be calculated by adding, subtracting, dividing or multiplying values from the source, significant performance loss can be attained by doing all these calculations using formulas.

DSO activation

Always use standard DSO, don’t use write-optimized DSO.

At the database level, a standard DSO consists of 3 transparent tables:

- Activation queue

- Active data table

- Change log

The activation queue holds new data records that have not yet been activated. Once activation has taken place, the data is deleted from the activation queue. The active data can now be found in the active data table where it was aggregated based on the semantic key of the DSO. The key figures may have been overwritten or added depending on the setting in the transformation. The change log contains the change history for the delta update from the DSO into other data targets, such as other DSOs or InfoCubes. Both the activation queue and the change log have a technical key that consists of:

- Request ID (load request or activation request)

- Package ID

- Record Number

Image 5: Standard DSO

When the data in a standard DSO is activated SIDs will be generated. A Surrogate ID (SID) is an integer number which is assigned to each master data value. These SIDs are stored in separate tables called SID tables. For each value entering the active data table of the DSO, SAP BW will check the SID table of the InfoObject into which the value will be written. If no existing value is found, the system will generate a new SID. The generation of SIDs upon activation is the default setting for each new standard DSO created in SAP BW.

Image 6: Default settings for standard DSO

It goes without saying that this process of activation can be quite time consuming. That is why the staging layer of your SAP BW system should be built using as many standard DSOs as possible to achieve sub optimal performance.

Unfortunately, in many cases, the staging layer is built using the highly efficient Write-Optimized DSOs. In the staging layer, raw data coming from the source system is persistently stored. Since no reporting should ever take place on objects in this layer and no complex transformations are needed yet, it makes sense to use an object that allows for efficient data writing. That is why in well performing SAP BW systems standard DSOs are avoided in this layer.

Image 7: Changing the Type of DataStore Object to Write-Optimized

Write-Optimized DSOs were designed to load data in a highly efficient way, without any activation or SID generation. They do not have the 3 transparent tables that make up a standard DSO. Instead, they only have an active table. This type of DSO can be seen as a place where all available source system data is dumped in its rawest form.

However, not all is lost when you encounter efficient Write-Optimized DSOs in the staging area. Since they are only efficient when it comes to data loading, but highly inefficient for querying, you could seize this opportunity to build a few incredibly slow reports on these DSOs. End user frustration is guaranteed.

Database reads

As a rule of thumb one could say that database reads are slow whereas internal table reads are fast.

Therefore the abundant use of ‘select’ statements on a database table will have a hugely negative impact on the system’s performance. Avoid replacing these time consuming database reads by fast internal table reads.

When loading data from PSA to DSO, DSO to DSO, DSO to InfoCube, etc. the data is being pushed through a transformation. Within this transformation a bunch of rules will determine how the data from the source object will be transformed into the resulting data of the target object. Several types of transformation rules exist as previously shown in image 3. One of these transformation rule types can be particularly useful for generating a flood of database reads: the routine! For this transformation rule type a number of input fields can be selected from the source of the date (PSA, DSO,… ). The resulting value is calculated based on the input values using ABAP coding. This piece of ABAP coding will be executed for each and every record in the source package. Imagine you were to include a ‘select’ statement on a database table to look up a value based on the input values in that piece of ABAP coding. For a source package size of 50.000 records, this would generate an impressive 50.000 database reads.

In over performing systems, these 50.000 slow ‘selects’ have most likely been replaced by 1 slow ‘select’ and 50.000 fast internal table reads. In the start routine of the transformation, all the data needed for the lookup is read from the database table in 1 go and poured into an internal table. Later on, in the transformation routine, this internal table is read for the lookup. This also happens once for each record in the source package.

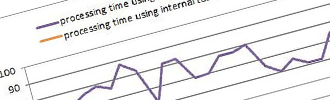

Image 8 illustrates the performance gains for the previously described lookup scenario. As an experiment, 50.000 lookups have been executed and timed using both strategies. This experiment was repeated 25 times. As can be seen on the chart, doing the 50.000 lookups using 50.000 database reads takes about 70 seconds on average. The same result can be achieved in about 6,5 seconds using one database read to fill an internal table followed by 50.000 internal table reads. This means that using an internal table is 11 times faster than repeatedly reading the database.

Image 8: Database reads vs. internal table reads

On the flip side

Of course, if you need a fast and reliable SAP Business Warehouse system we recommend you do the exact opposite of the suggestions above.